The Rapid Rise of Enterprise AI and What It Means for You

Enterprise AI is rapidly redefining how organizations achieve their most critical outcomes, from accelerating revenue growth to enhancing customer experiences at scale. Generative AI (GenAI) is a transformative force that is reshaping digital infrastructure, amplifying workforce productivity, and unlocking new levels of innovation, particularly when deployed intelligently at the endpoint.

The Future of Work: Why IT Leaders Are Turning to AI PCs

AI PCs Turn Endpoints into High-Impact AI Engines

AI PCs go beyond standard desktops or laptops; they are next-generation productivity and intelligence hubs that are:

- Capable of on-device AI inference, GenAI tasks, and data analytics

- Purpose-built with AI-optimized CPUs, GPUs, and NPUs

- Suited to use cases such as cybersecurity, local model execution, IT task automation, and video processing

80% of organizations say AI PCs have accelerated their endpoint refresh cycle.

55% of organizations cite employee productivity as the top priority driving their endpoint strategy.

Tangible Business Outcomes: Productivity, Security, and TCO

Investing in AI PCs offers measurable value across key business goals:

| Objective | AI PC Impact |
|---|---|
| Employee Productivity | Personalized GenAI assistants reduce time spent on routine tasks and boost creative output. |
| Cybersecurity | Local AI models enhance threat detection and response, even in air-gapped or low-bandwidth settings. |
| Cost Optimization | Reduces server load, lowers bandwidth use, and avoids overreliance on subscription-based AI services. |

Hybrid AI Is Here, and AI PCs Make It Work

AI deployments are rapidly evolving into hybrid models that blend cloud-based large language models (LLMs) with edge and endpoint-based AI. According to ESG, 40% of GenAI workloads are now being deployed on-premises, at the edge, or directly on AI PCs. This shift is driven by the need for reduced latency, stronger compliance with data sovereignty requirements, and better cost predictability.

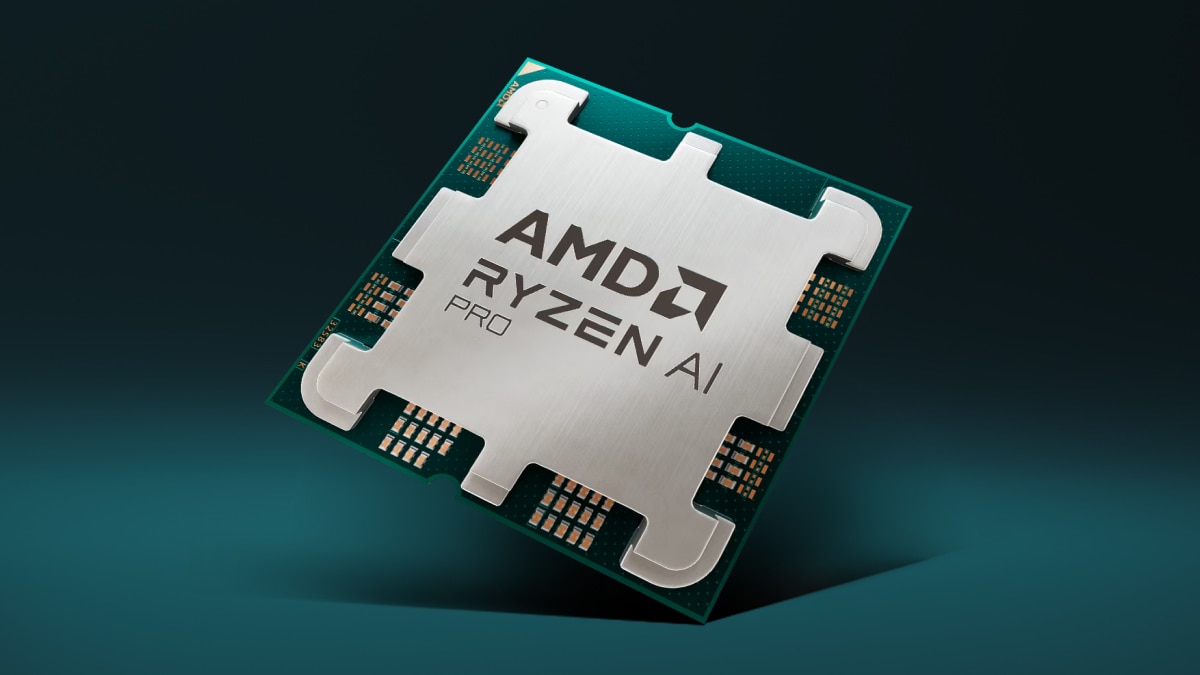

The AMD AI technology stack, including Ryzen™, EPYC™, Instinct™, and Radeon™ products, helps support the full spectrum of hybrid-infrastructure strategies, giving IT leaders the agility to scale AI deployments flexibly and securely without being confined to a cloud-only architecture.

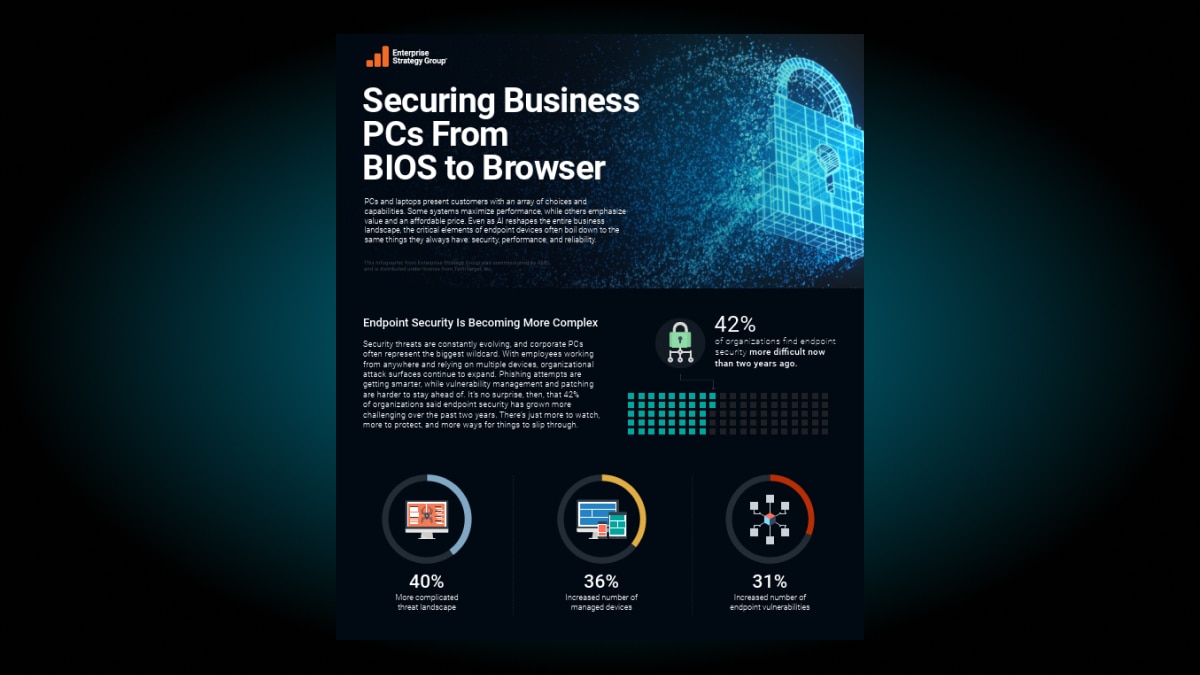

Security and Reliability without Compromising Performance

Today’s AI PCs are engineered with enterprise-grade security features that make them well suited to mission-critical workloads. AMD PRO Technologies helps protect businesses with a comprehensive set of security features, robust manageability tools, and enterprise-grade stability and reliability. For organizations in regulated industries or those handling sensitive data, deploying AI at the edge on secure AI PCs can substantially improve compliance posture, safeguard privacy, and preserve operational sovereignty without sacrificing performance.

How AMD Makes AI Adoption Low Risk

Investing in AMD-powered AI PCs aligns your organization with a future-proof, open, and cost-effective AI roadmap. AMD AI solutions offer performance advantages, collaboration with all major OEMs, and support for open AI ecosystems.

AMD Ryzen PRO processors with Ryzen AI are high-performance processors with intelligent power gating, optimized for multitasking workloads that travel with their users. Ryzen PRO processors include a dedicated AI engine, enabling AI PCs to deliver high AI performance with strong cost and energy efficiency.

Get Started with an AI Business PC

Need more information about an AMD commercial product for your business? Get in touch with an AMD expert to find out what AMD can do for your organization.

Footnotes

- As of May 2023, AMD has the first available dedicated AI engine on an x86 Windows processor, where 'dedicated AI engine' is defined as an AI engine that has no function other than to process AI inference models and is part of the x86 processor die. For detailed information, please check: https://www.amd.com/en/technologies/xdna.html. PHX-3a