Beyond the Hype: Turning AI PC Potential Into Economic Reality

Apr 23, 2026

Across the enterprise technology landscape, a recurring theme has emerged over the past year. The initial wave of generative AI hype has crested, and organizations are now facing the sober, complex reality of deployment. The question is no longer whether AI will transform the enterprise, but how to design and resource an infrastructure strategy that turns AI’s potential into measurable economic value with minimal disruption.

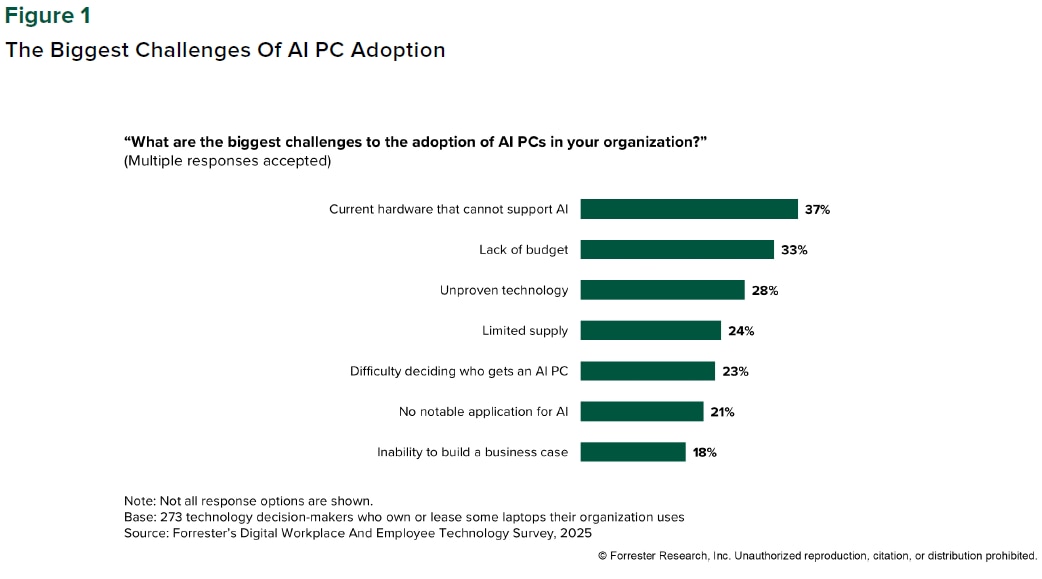

A recent Forrester report, Workplace CPUs In Transition: Should You Invest In AI PCs?, explores the local aspect of this question by surveying enterprise attitudes towards AI PCs, how IT decision-makers (ITDMs) and system administrators perceive the added value these systems deliver, and what long-term adoption trends are likely to look like.

The impulse to adopt a wait-and-see attitude towards AI is both understandable and potentially disadvantageous in ways that could impact a company's bottom line for years to come. The AI PC represents the critical edge of a much larger computational continuum that spans local, hybrid, and pure cloud configurations. Successful organization-wide AI deployments require executives to think about end-to-end AI ecosystems from this perspective rather than considering each piece in isolation.

Layering the Proverbial Cake

Enterprise customers may be curious about the future of AI PCs, but they aren’t willing to sacrifice decades of stability and compatibility just to run AI workloads on a GPU or NPU. The approach AMD takes towards AI doesn't ask them to. In fact, it's designed to help IT decision-makers address common challenges, like those shown in Figure 1 below.

AMD PRO processors begin with the long-proven x86 architecture before adding support for the AVX-512 instruction set to improve the CPU’s local AI execution. Security and manageability capabilities are added to the proverbial cake next, to ensure enterprise IT teams can take advantage of hardware-backed protections and the full suite of included manageability features. AMD PRO CPUs use the open DMTF DASH standard, with support for wireless networking via AIM-T (AMD Integrated Management Technology), and provide equal deployment times and equivalent features compared to competitive solutions, as well as support for multiple wired and wireless vendors.
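For IT teams that want to confirm which machines in an existing fleet expose AVX-512, one possible check is sketched below. It is a minimal illustration, not an AMD tool: it assumes a Linux host where `/proc/cpuinfo` lists CPU feature flags and treats the `avx512f` (AVX-512 Foundation) flag as the indicator. The helper names `cpu_flags`, `has_avx512`, and `read_cpuinfo` are hypothetical and exist only for this example.

```python
def cpu_flags(cpuinfo_text):
    """Return the feature flags from /proc/cpuinfo-style text as a set."""
    for line in cpuinfo_text.splitlines():
        key, sep, value = line.partition(":")
        # The first "flags" line is enough; it repeats for every logical CPU.
        if sep and key.strip() == "flags":
            return set(value.split())
    return set()


def has_avx512(cpuinfo_text):
    """True if the AVX-512 Foundation flag (avx512f) is present."""
    return "avx512f" in cpu_flags(cpuinfo_text)


def read_cpuinfo(path="/proc/cpuinfo"):
    """Read the cpuinfo file; return an empty string if it is unavailable."""
    try:
        with open(path, encoding="utf-8") as handle:
            return handle.read()
    except OSError:
        # Assumption: non-Linux hosts simply have no such file.
        return ""


if __name__ == "__main__":
    supported = has_avx512(read_cpuinfo())
    print("AVX-512 reported by the OS:", supported)
```

On hosts without `/proc/cpuinfo`, the sketch reports `False` rather than failing, so a negative result there means "unknown" rather than "unsupported".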

GPUs are where many local AI workloads run today, and AMD PRO processors include powerful integrated graphics solutions based on the AMD RDNA™ 3.5 architecture to ensure AI applications have access to the compute power they need. The onboard NPU completes the equation by providing a low-power, highly efficient processor for AI workloads, improving system responsiveness and freeing the CPU and GPU to handle other tasks. Finally, all AMD Ryzen™ AI PRO processors, from budget-oriented processors to high-end hardware, feature an NPU capable of between 50 and 60 TOPS.1

Deploying cohesive processor architectures with a common set of standards, like AVX-512, a single NPU design shared across multiple products, and an open software platform such as AMD ROCm™ helps IT leaders place workloads where they make economic sense. Data movement is computationally expensive – it accounts for a non-trivial percentage of a CPU or GPU’s total power expenditure in any given workload – so placing work where it runs most efficiently is a way of reducing power consumption and resource utilization.

Taken in aggregate, the strengths of AMD PRO processors address many of the AI adoption roadblocks Forrester identified. Choosing to standardize a common NPU design across many different Ryzen AI PRO processors gives budget-conscious companies more flexibility to adopt AI at every price point and avoids forcing enterprise buyers into higher product tiers just to achieve reasonable AI performance. Deciding who receives an AI PC is much easier when every PC you deploy ships with a capable NPU. Even limited system supply is less of an issue when you work with a company that promises professional products will be supported in-market for years at a time, and AMD does.

Conclusion

The Forrester report linked above recommends a range of practical steps enterprises can take to improve AI deployments, including scenario-based planning, aligning refresh cycles to major market evolutions, and mapping employee personas to the tasks they perform to assess the level of AI support different roles will need.

AMD has commissioned persona-based research projects with companies like Principled Technologies and Signal65 to better understand how much time different types of employees can save with AI in an ordinary week. The findings across the four initial personas – tech experts, road warriors, knowledge workers, and power users – consistently point towards hundreds of hours saved over time, even when use cases and evaluated applications differ.

While the specifics will vary depending on each individual customer’s needs, AI PCs will be an important part of most, if not all, successful corporate AI strategies in the long term. The best way to move AI from a novel curiosity to a load-bearing part of the business is to start with real-world use cases gathered from the employees who will initially test your chosen AI models and strategies. Gather data on what works (and what doesn't), and adjust the overarching strategy accordingly.

The market is still in transition; that much is obvious. But by focusing on the continuum of compute, preserving x86 compatibility, prioritizing silicon-level security, and championing open ecosystems, AMD is building a foundation customers can rely on to advance their AI goals. The broader mission is to help enterprises move past the hype and build AI infrastructure that delivers real, enduring, and sustainable business value.

1 - Trillions of Operations per Second (TOPS) for an AMD Ryzen processor is the maximum number of operations per second that can be executed in an optimal scenario and may not be typical. TOPS may vary based on several factors, including the specific system configuration, AI model, and software version. GD-243.