Next Gen Networking Transport for Large Scale AI Training

May 06, 2026

Authored by Krishna Doddapaneni

What are the demands of today’s large language model AI training workloads?

To feed some of the world’s most powerful large language models with sufficient resources, organizations need the compute power and networking infrastructure to handle the more than 10 trillion parameters behind these AI models. Training these computationally intensive workloads requires not just hundreds of thousands of GPUs working for days on end, but also a network fabric that can handle issues like congestion and GPU downtime.

Modern AI companies can run into trouble when they rely on traditional network transports like RoCEv2 (RDMA over Converged Ethernet), which often lack the functionality to keep AI training workloads running at high speed for months.

To provide a solution, AMD, in collaboration with OpenAI, Microsoft, and other industry leaders, has developed the specification behind Multipath Reliable Connection (MRC), a new transport protocol. With MRC, providers of large language models can train models with predictability, performance, and resilience across large-scale AI training clusters.

In this post, we will discuss the challenges of traditional Ethernet, why models today need MRC, and how AMD has contributed to this leading-edge transport protocol.

Why Traditional Networks Often Fall Short - Challenges of RoCEv2

Ethernet-based networks are the dominant form of networking in data centers today. While RoCEv2 has been used successfully for many data center workloads, it sometimes falls short when faced with the trillion-parameter scale of large language models such as those behind ChatGPT.

First, traditional RoCEv2 doesn’t fully utilize the GPU-to-GPU communication bandwidth needed by today’s major large language models. Large AI training jobs need multiple data paths between GPU nodes, but current protocols support only a single path per connection, which prevents a connection from spreading its traffic across ECMP paths and leaves networks and GPUs underutilized.

Another issue with RoCEv2 is the handling of network downtime. If a link flaps, a switch reboots, or an ECMP path goes out, the GPU-to-GPU communication running behind the model training can be disrupted, leading to longer training times and potential packet loss.

Packet loss is a further issue that RoCEv2 often does not handle efficiently. Because of RoCEv2’s go-back-N mechanism, when a single packet is lost, the sending device retransmits not just that packet but all subsequent packets in the current retransmission window. This inefficiency causes unneeded data to be transferred, which can lead to large-scale congestion events and additional overhead.

In response to congestion, traditional RoCEv2 attempts to create a lossless network with Priority Flow Control (PFC). PFC is commonly used to provide lossless behavior for RoCEv2, but at large scale its pause‑based behavior can contribute to head‑of‑line blocking, congestion spreading, and operational complexity.

While RoCEv2 can easily handle traditional non-AI workloads, it sometimes fails to meet the demands of large-scale AI training for today’s most in-demand large language models.

MRC Benefits over RoCEv2 and Multi-plane Support

To help Ethernet fulfill the demands of large-scale AI workloads, OpenAI has announced that, in collaboration with AMD and other industry leaders, it has developed Multipath Reliable Connection (MRC). MRC delivers a variety of features that enable large-scale AI training.

With RoCEv2, the packets of a connection are confined to a single path from point A to point B, which contributes to congestion. To overcome this, MRC introduces Intelligent Packet-Spray Load Balancing, so that if a packet’s path is unusable, packets can traverse other paths on the network. This can enable AI models to benefit from higher bandwidth utilization, reduced tail latency, and fine-grained load balancing at the packet level.
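To make the difference concrete, here is a minimal sketch that compares per-flow hashing (every packet of a connection pinned to one path) with per-packet spraying. It is an illustration only: the round-robin spray policy, the function names, and the example addresses are our assumptions and are not taken from the MRC specification.

```python
import zlib
from collections import Counter


def per_flow_load(flows, packets_per_flow, num_paths):
    """Per-flow hashing: all packets of a flow land on one hashed path."""
    load = Counter({path: 0 for path in range(num_paths)})
    for flow in flows:
        # Deterministic hash of the flow tuple picks a single path.
        path = zlib.crc32(repr(flow).encode()) % num_paths
        load[path] += packets_per_flow
    return load


def per_packet_load(flows, packets_per_flow, num_paths):
    """Per-packet spraying: packets are spread round-robin over all paths.

    Round-robin is an assumed stand-in for the real spray policy.
    """
    load = Counter({path: 0 for path in range(num_paths)})
    sent = 0
    for _flow in flows:
        for _ in range(packets_per_flow):
            load[sent % num_paths] += 1
            sent += 1
    return load


if __name__ == "__main__":
    # Two illustrative flows (source, destination, UDP port, source port).
    flows = [("10.0.0.1", "10.0.0.2", 4791, 49152 + i) for i in range(2)]
    print("per-flow  :", dict(per_flow_load(flows, 1000, 8)))
    print("per-packet:", dict(per_packet_load(flows, 1000, 8)))
```

With two large flows and eight paths, per-flow hashing can load at most two paths, while spraying puts the same number of packets on every path.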

To address the issues with network downtime, MRC introduces intelligent path-failover and traffic rebalancing operations, all of which run at the network interface card (NIC) level. These operations consist of automatic path failure detection, seamless traffic rerouting, fast path recovery, and minimal application impact, helping AI training continue with minimal disruption from network failures.
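The detect, reroute, and recover cycle described above can be sketched in a few lines. This is a simplified model under our own assumptions: the `PathTable` class, the consecutive-timeout threshold, and the round-robin selection are hypothetical and do not describe how an MRC NIC actually detects failures.

```python
class PathTable:
    """Tracks path health and only hands out healthy paths (hypothetical)."""

    def __init__(self, num_paths, fail_threshold=3):
        self.misses = [0] * num_paths      # consecutive timeouts per path
        self.fail_threshold = fail_threshold
        self._next = 0                     # round-robin cursor

    def healthy(self):
        """Paths whose consecutive timeout count is below the threshold."""
        return [p for p, m in enumerate(self.misses) if m < self.fail_threshold]

    def on_timeout(self, path):
        """Failure detection: count a missed acknowledgement on this path."""
        self.misses[path] += 1

    def on_ack(self, path):
        """Recovery: any acknowledgement (for example, of a probe) resets the path."""
        self.misses[path] = 0

    def pick(self):
        """Rerouting: choose the next healthy path, skipping failed ones."""
        paths = self.healthy()
        if not paths:
            raise RuntimeError("no healthy paths available")
        path = paths[self._next % len(paths)]
        self._next += 1
        return path


if __name__ == "__main__":
    table = PathTable(num_paths=4)
    for _ in range(3):
        table.on_timeout(1)                # path 1 stops answering
    print([table.pick() for _ in range(6)])
    table.on_ack(1)                        # path 1 answers again
    print(table.healthy())
```

Because the decision is made per packet at the sender, the application above it never sees the failed path, only a slightly smaller set of paths carrying its traffic.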

To address the issues with RoCEv2’s go-back-N packet recovery, MRC introduces Selective Acknowledgement (SACK) and Negative Acknowledgement (NACK), which let the sender retransmit only the lost packets instead of the whole window. This can lead to faster recovery times with reduced network overhead and better support for out-of-order packet delivery.
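A small worked example shows how much traffic the two recovery schemes resend for the same loss. The function names and the 16-packet window are illustrative assumptions; the sketch models only which sequence numbers are retransmitted, not the acknowledgement formats themselves.

```python
def go_back_n_retransmit(window, lost):
    """Go-back-N: resend from the first lost packet to the end of the window."""
    if not lost:
        return []
    first_lost = min(lost)
    return [seq for seq in window if seq >= first_lost]


def selective_retransmit(window, lost):
    """Selective recovery: resend only the packets reported as lost."""
    return [seq for seq in window if seq in lost]


if __name__ == "__main__":
    window = list(range(100, 116))   # 16 packets in flight (assumed size)
    lost = {103}                     # a single packet is dropped
    print(len(go_back_n_retransmit(window, lost)), "packets resent with go-back-N")
    print(len(selective_retransmit(window, lost)), "packet resent selectively")
```

For a single loss early in the window, go-back-N resends most of the window while selective recovery resends one packet, and the gap grows with the window size.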

MRC also introduces Network-Signaled Congestion Control (NSCC), which is based on the Ultra Ethernet Consortium (UEC) 1.0 specification. NSCC improves on PFC for large-scale AI training by applying path-aware, sender-based control, so congestion is managed at the path level rather than across the whole network.

NSCC also provides Round Trip Time (RTT)-aware window control that adjusts packet sending rates based on round-trip travel times. These features help reduce the complexity of congestion control, improve throughput while minimizing packet delay and loss, and allocate network resources evenly across multiple senders.
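To illustrate what RTT-aware window control means in general, the sketch below grows a sender’s window while the measured RTT stays at or below a target and shrinks it in proportion to the overshoot otherwise. This is a generic delay-based scheme written for illustration; it is not the NSCC algorithm, and `update_window`, its parameters, and the constants are all our assumptions.

```python
def update_window(cwnd, rtt_us, target_rtt_us, *,
                  increase=1.0, max_decrease=0.5, min_cwnd=1.0):
    """Return the new congestion window after one RTT sample.

    Generic delay-based control (not NSCC): additive increase when the
    RTT is on target, proportional decrease when it is above target.
    """
    if rtt_us <= target_rtt_us:
        return cwnd + increase
    # Fraction of the measured RTT that is queueing beyond the target.
    overshoot = (rtt_us - target_rtt_us) / rtt_us
    # Cap the cut so one bad sample cannot collapse the window.
    factor = 1.0 - min(max_decrease, overshoot)
    return max(min_cwnd, cwnd * factor)


if __name__ == "__main__":
    cwnd = 10.0
    for rtt in (8.0, 9.0, 12.5, 20.0, 9.0):   # RTT samples in microseconds
        cwnd = update_window(cwnd, rtt, target_rtt_us=10.0)
        print(f"rtt={rtt:5.1f} us -> cwnd={cwnd:.2f}")
```

A path-aware sender would keep one such window per path, so a slow path is throttled without penalizing traffic on the others.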

While MRC addresses many of RoCEv2’s transport limitations, modern AI clusters rely on multi-plane architectures to scale to tens or hundreds of thousands of GPUs. By distributing traffic across multiple independent network fabrics, these designs increase aggregate bandwidth, improve redundancy, and enable simpler two-tier topologies.

MRC is built to fully support multi-plane networks by dynamically distributing traffic across planes and paths. This allows clusters to scale efficiently with lower complexity and reduced cost, while built-in failover ensures traffic shifts to healthy paths in real time, maintaining consistent performance even during network disruptions.

AMD Contributions to MRC

AMD has been involved from the beginning in the effort to deliver an open, Ethernet-based protocol.

AMD has played a central role in shaping the MRC protocol, working alongside industry leaders including OpenAI, Microsoft, Broadcom, Intel and others to define a specification grounded in real-world AI deployment challenges. This collaborative approach ensures that MRC is not only technically robust, but also aligned with the needs of the largest and most demanding training environments.

Building on this foundation, AMD authored the NSCC congestion control algorithm, now defined in the UEC Congestion Control specification and serving as a defined congestion management mechanism in MRC. This contribution addresses one of the most complex challenges in scale-out networking: efficiently managing congestion at massive scale.

In addition, AMD developed the IB/RDMA transport semantic layer extensions for MRC, enabling seamless integration with existing RDMA programming models while introducing the multipath capabilities that distinguish MRC from traditional transports. This allows AI frameworks to benefit from MRC’s performance and resiliency improvements without requiring significant changes to existing code.

Production-Ready Implementations and Deployment Flexibility

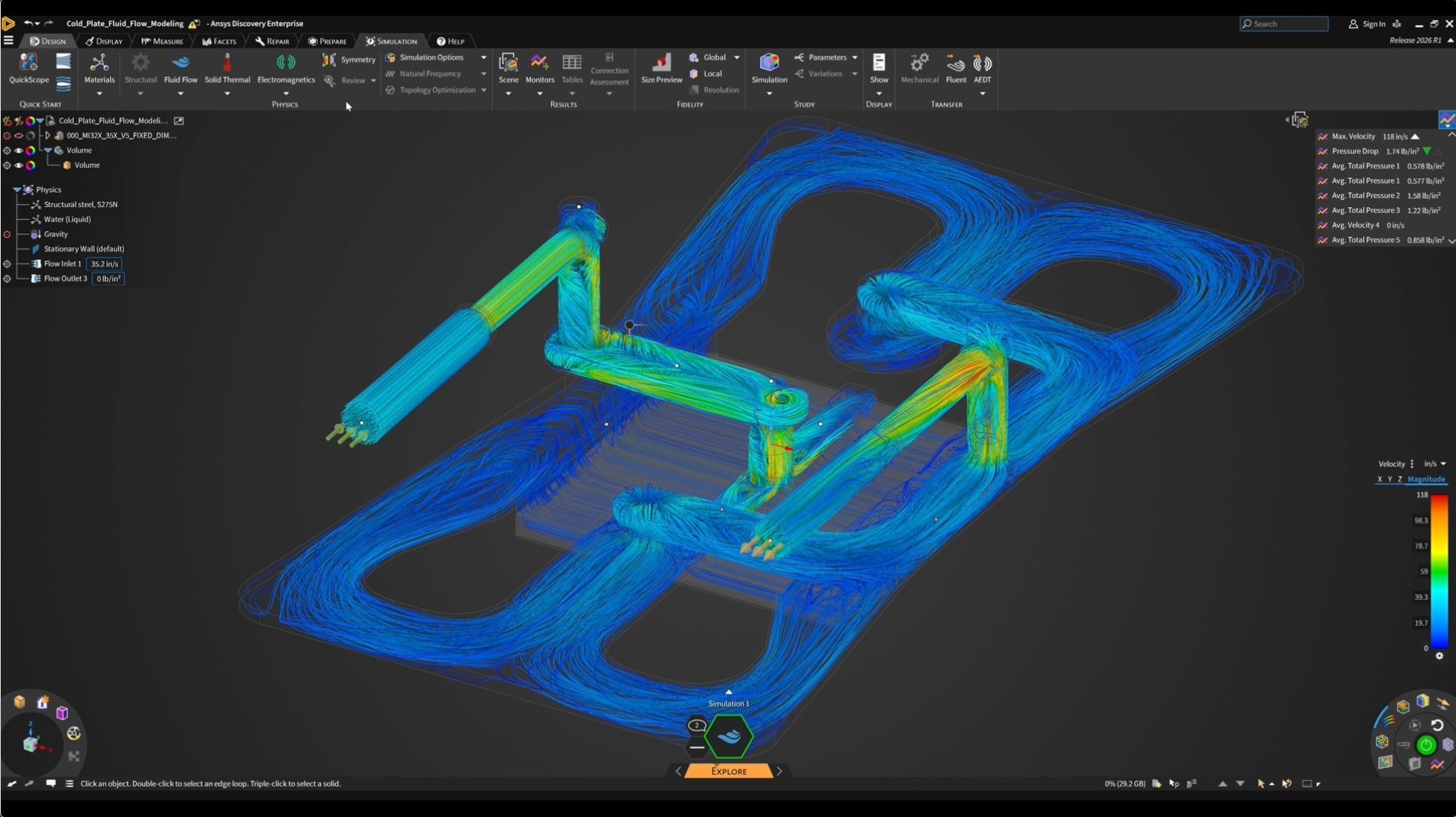

AMD has implemented the MRC transport protocol and congestion control across two generations of AI-optimized ASICs, bringing the transport protocol from specification to real-world deployment. The first-generation AMD Pensando™ Pollara 400 AI NIC has been validated at scale in AMD labs with AMD Instinct™ MI350 and MI355 GPU clusters, co-validated with OpenAI. This collaboration with one of the world’s leading AI research organizations demonstrates MRC’s readiness for the most demanding workloads.

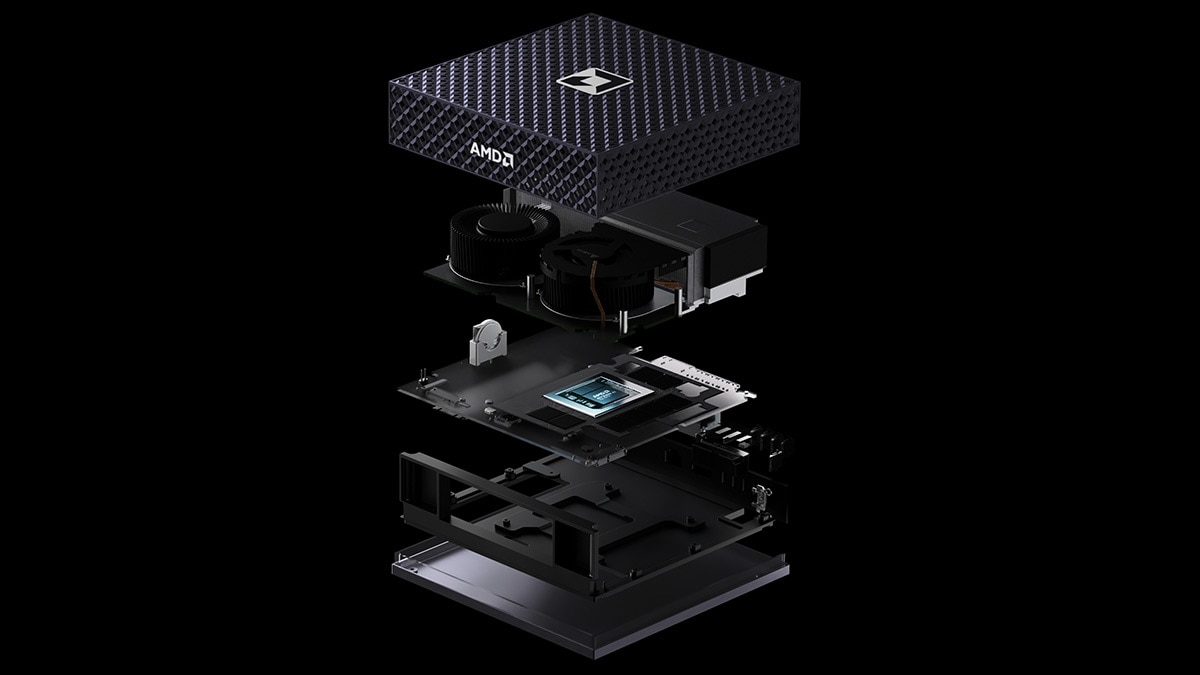

Building on this foundation, the second-generation AMD Pensando “Vulcano” 800 AI NIC is currently being qualified for AMD Instinct™ MI400 Series GPU clusters. It supports multi-plane backend configurations such as 4×200G and 8×100G, delivering twice the bandwidth of the previous generation while maintaining the same advanced MRC capabilities. With support for multiple NICs per GPU, “Vulcano” enables scale-out bandwidth of up to 2.4 Tbps per GPU, addressing the needs of large-scale AI training deployments.
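The bandwidth figures above can be checked with simple arithmetic. In the sketch below, the 400 Gbps and 800 Gbps values are read from the product names, and the count of three NICs per GPU is inferred by dividing 2.4 Tbps by 800 Gbps; that count is our inference, not a stated specification.

```python
def aggregate_gbps(ports, gbps_per_port):
    """Total NIC bandwidth for a multi-plane port configuration."""
    return ports * gbps_per_port


# Multi-plane configurations named in the text.
configs = {
    "4x200G": aggregate_gbps(4, 200),
    "8x100G": aggregate_gbps(8, 100),
}

POLLARA_GBPS = 400                      # assumed from the "400" in the name
vulcano_gbps = configs["4x200G"]        # 800 Gbps per NIC

# Inferred: how many 800G NICs add up to 2.4 Tbps per GPU.
nics_per_gpu = 2400 // vulcano_gbps

if __name__ == "__main__":
    print(configs)
    print("generation ratio:", vulcano_gbps / POLLARA_GBPS)
    print("NICs per GPU for 2.4 Tbps:", nics_per_gpu)
```

Both port configurations give the same 800 Gbps per NIC; the difference is how many independent network planes that bandwidth is split across.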

The MRC implementation from AMD is also designed for flexibility across diverse network environments. It supports both SRv6-based forwarding, which enables explicit path control and fine-grained traffic engineering, and traditional ECMP or dynamic load balancing approaches. This allows organizations to deploy MRC within existing infrastructures while also adopting more advanced routing models as needed.

AMD is committed to making MRC broadly available to the AI ecosystem, with general availability planned for the AMD Pensando “Vulcano” 800 AI NIC platforms. In addition, even though the AMD Pensando Pollara 400 AI NIC was designed before the MRC specification was created, it fully supports MRC, which demonstrates the ability of AMD to design future-proof AI NIC architectures.

Positioned as a scale-out transport for AI training clusters, MRC is supported by ongoing collaboration with organizations looking to deploy next-generation AI infrastructure at scale.

Deploy MRC Today

Today’s large language model training workloads place unprecedented demands on data center infrastructure, requiring not only massive GPU scale but also highly efficient, resilient, and intelligent networking. Traditional solutions like RoCEv2, while effective for conventional workloads, struggle to meet these requirements due to limitations in path utilization, congestion handling, and fault tolerance.

Multipath Reliable Connection (MRC) addresses these challenges by rethinking how data moves across AI clusters. Its ability to fully leverage multi-plane architectures further ensures that modern AI clusters can scale efficiently while maintaining consistent throughput, even in the face of network disruptions.

Contributions from AMD, from shaping the protocol alongside industry leaders to delivering production-ready implementations with AMD AI NICs, demonstrate a clear commitment to advancing open, Ethernet-based solutions for AI at scale. These innovations not only improve performance today but also lay the groundwork for the next generation of AI infrastructure.

As the pace of AI innovation accelerates, organizations must rethink their networking strategies to stay competitive. Now is the time to explore how MRC can transform your AI training environment, enabling faster time to insight, better resource utilization, and more resilient operations. Connect with AMD to learn how you can adopt MRC and build a future-ready AI infrastructure, or read more in the associated research paper.