Ansys Discovery on AMD Graphics: Jointly Scaling the Memory Wall

May 05, 2026

As demand for AI compute increases, enterprise engineers must balance the desire for higher AI performance against the weight, heat, and electrical demands of these rapidly advancing systems. Engineers have a variety of resources to aid them in this task, and simulation software such as Ansys Discovery sits at the heart of much of their work.

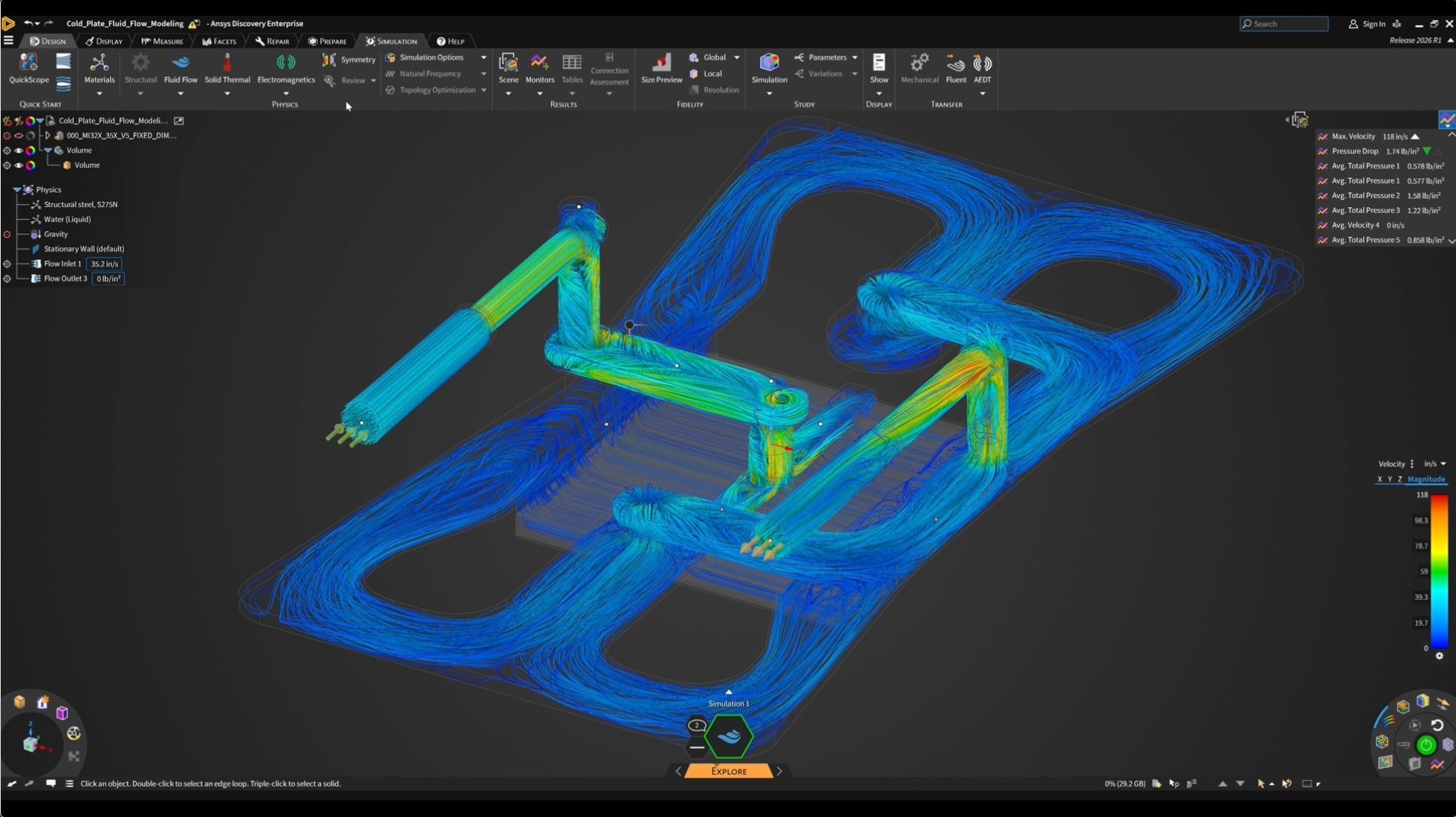

Ansys Discovery combines real-time physics simulation and geometry modeling (Explore) with a high-fidelity simulation option for when precision is more important than a real-time environment (Refine). The goal of Ansys Discovery is to move simulation to an earlier point in the product design cadence, allowing engineers to test ideas and evaluate proposed changes without waiting on a slower, more conventional CAE (Computer-Aided Engineering) approach.

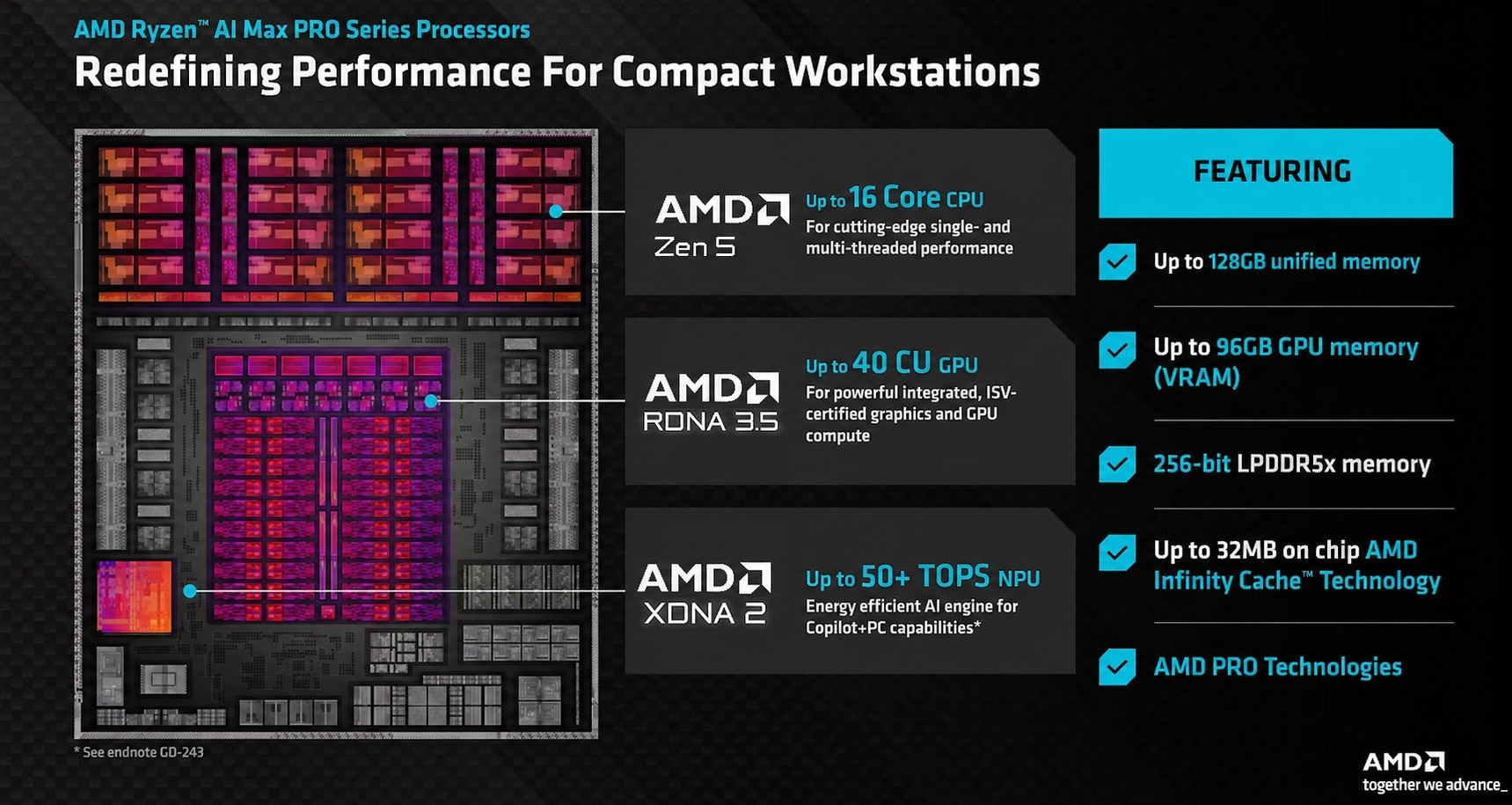

Ansys, a part of Synopsys, has been a leader in CAE and multi-physics engineering for decades, and the company has recently expanded its list of supported GPUs. As this whitepaper discusses, Ansys 2026 R1 has added support for the AMD Radeon™ GPUs integrated into all AMD Ryzen™ AI Max PRO processors, thanks to the AMD ROCm™ software platform and the HIP kernel programming language.

According to Roman Walsh, Product Manager for Discovery at Ansys, the memory demands of modern simulation work drove much of the motivation behind its additional GPU support. "For Discovery users, having workstation-level performance for their most demanding problems is a primary driver," Walsh says. "Formerly, high-end simulation meant costly graphics cards or remote machines, and most companies can't justify that expense for all workstations. We frequently see engineers running simulations that require 30-plus GB of GPU memory, but higher-capacity cards at a reasonable price point are not widely accessible. This is particularly apparent in electronics cooling, where the thin, small nature of the components demands exactly the kind of GPU capacity the AMD platform can now deliver."

As Walsh states, memory constraints have a significant impact on the types of simulations users can practically run. When models exceed available memory on a conventional discrete GPU, performance plummets as the system begins paging data from main memory across the relatively high-latency PCIe bus. Engineers have historically had two options: simplify the model by stripping out geometry they would otherwise include for accuracy or, if possible, wait for compute time on a more powerful cloud system.

AMD Ryzen™ AI Max PRO processors offer a third option.

Breaking the dGPU Memory Ceiling

AMD Ryzen™ AI Max PRO Series processors rebalance the typical tradeoffs semiconductor manufacturers make between CPU, GPU, and main memory and, in doing so, create a solution unlike anything else on the market. Here’s how:

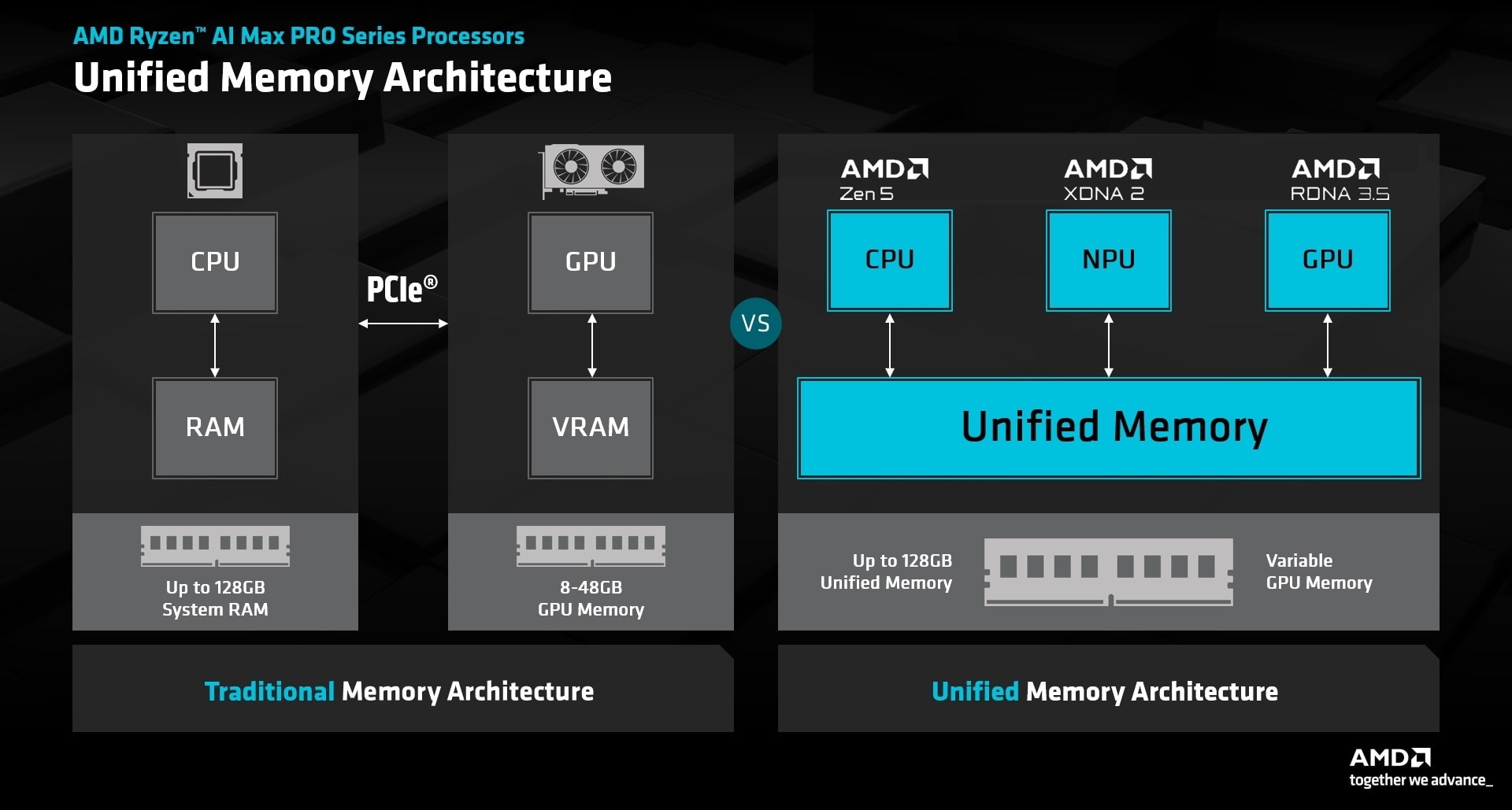

Traditional desktops and mobile workstations split CPU and GPU memory into separate pools of physically distinct memory, as shown on the left. The CPUs in these systems rely on standards like LPDDR5X or DDR5 and can address 128GB of memory (or more), while an accompanying discrete GPU offers a separate, dedicated pool of VRAM (Video RAM).

The advantage of this traditional architecture is that the GPU gets maximum memory bandwidth. The disadvantage is the constraint it puts on model size.

Exactly what happens when a model, 3D visualization, render, or assembly exceeds available graphics memory differs somewhat depending on the application, but the most typical outcomes are either an out-of-memory error message or an enormous performance hit as the GPU overruns its dedicated VRAM and attempts to fill its compute units by tapping the relatively high-latency PCIe bus. This type of paging can technically keep workloads running, but it does few favors for an engineer who needs a solution by tomorrow as opposed to next week.

AMD Ryzen™ AI Max and Max PRO processors avoid this potential bottleneck by sharing a unified pool of memory across the CPU, GPU, and NPU. The benefits of this approach include full cache coherency (and lower latency) between CPU and GPU, support for zero-copy operations, and the option to dedicate far more memory to the GPU than any traditional mobile memory architecture.
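
The capacity argument above can be made concrete with a small back-of-the-envelope sketch. The Python below is illustrative only: the function names, the 16 GB discrete card, the 64 GB GPU-addressable share of a unified pool, and the 32 GB/s link rate are all assumptions chosen for the example, not figures from Ansys or AMD. Only the 30 GB model size echoes the workloads Walsh describes.

```python
from dataclasses import dataclass


@dataclass
class MemoryPlan:
    fits: bool          # True when the whole model sits in GPU-addressable memory
    overflow_gb: float  # portion that would have to be paged from elsewhere


def plan_memory(model_gb: float, gpu_addressable_gb: float) -> MemoryPlan:
    """Compare a model's footprint with the memory the GPU can address directly."""
    overflow = max(0.0, model_gb - gpu_addressable_gb)
    return MemoryPlan(fits=overflow == 0.0, overflow_gb=overflow)


def min_seconds_per_pass(overflow_gb: float, link_gb_per_s: float) -> float:
    """Lower bound on the time to stream the overflow across a link once."""
    return overflow_gb / link_gb_per_s


MODEL_GB = 30.0          # echoes the "30-plus GB" simulations in the text
DISCRETE_VRAM_GB = 16.0  # hypothetical discrete card (assumption)
UNIFIED_GPU_GB = 64.0    # hypothetical GPU share of a unified pool (assumption)
LINK_GB_PER_S = 32.0     # assumed nominal host-to-GPU link rate

discrete = plan_memory(MODEL_GB, DISCRETE_VRAM_GB)
unified = plan_memory(MODEL_GB, UNIFIED_GPU_GB)

print(f"discrete: fits={discrete.fits}, overflow={discrete.overflow_gb:.1f} GB")
print(f"unified:  fits={unified.fits}, overflow={unified.overflow_gb:.1f} GB")
if not discrete.fits:
    t = min_seconds_per_pass(discrete.overflow_gb, LINK_GB_PER_S)
    print(f"at least {t:.2f} s to stream the overflow once")
```

In this sketch the hypothetical discrete card leaves 14 GB of the model outside VRAM, and a solver that revisits that data on every iteration would pay the transfer cost repeatedly, on top of the bus latency. The unified case has no overflow to move.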

In addition to 16 desktop-class cores with full-width AVX-512 SIMD units and up to 2,560 GPU cores (more than twice as many as any other AMD iGPU), AMD Ryzen AI Max PRO Series processors offer up to 32MB of MALL (Memory Attached Last Level) cache. The purpose of such caches, in both CPUs and GPUs, is to relieve pressure on main memory bandwidth by providing a significant block of on-die memory where information can be quickly retrieved. While often discussed in gaming and consumer contexts, the advantages of this approach are not confined to entertainment. Professional applications can, and do, benefit as well.

These features help systems equipped with AMD Ryzen AI Max PRO Series processors punch above their weight class when it comes to complex simulations and real-time impact modeling. And boosting available performance is particularly important considering how many core assumptions about data center and cooling design need to be updated for the AI era.

AI Server Cooling Raises the Degree of Difficulty

The air cooling solutions that data centers have relied on for decades commonly combine an aluminum or copper heatsink with dedicated fans to move cold air across a system or throughout a rack. Air cooler requirements and designs are both well understood, but these systems are only effective to a certain point. As per-rack power consumption climbs, the heatsink + fan solutions that worked for 200-400 watt parts become impractical for AI accelerators that draw 750W or more. Just one AI accelerator can produce the same heat as a running blow dryer, if blow dryers were designed to run around the clock. Scaling up conventional coolers to meet this kind of cooling demand, even when technically possible, is expensive, heavy, electrically inefficient, and noisy. This is why engineers have increasingly turned to liquid cooling as a practical answer to a pressing problem.

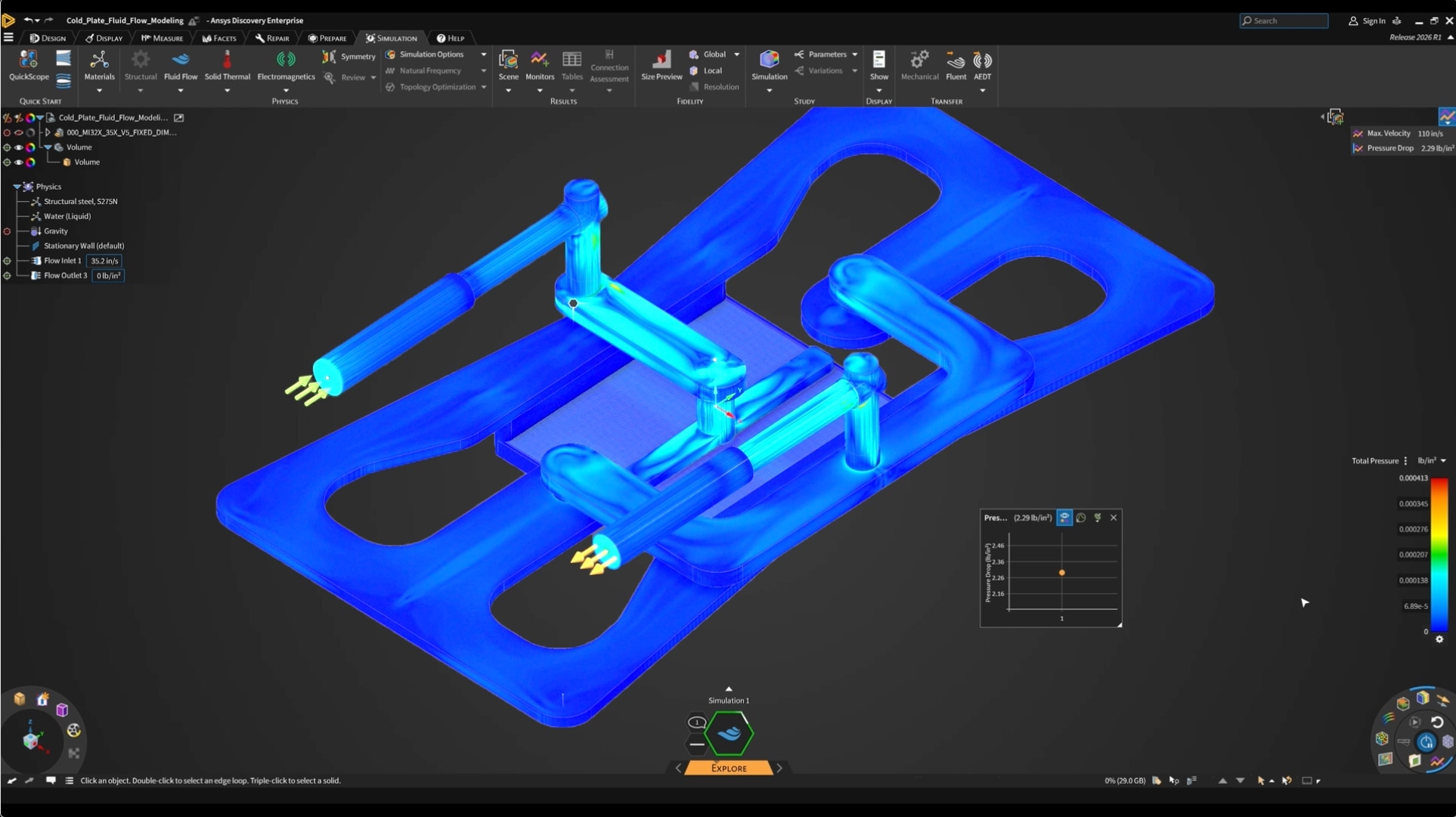

Instead of a heatsink and fan, liquid-cooled data centers use cold plates. These are precisely manufactured blocks of aluminum or copper with micro channels designed to address hot spots and overall thermal characteristics of the processors they cool. Because hot spots can constrain total chip performance, proper routing and flow rates are essential to delivering a solution that fully addresses the needs of the hardware it pairs with. Designing and simulating such systems prior to full manufacturing can spare a company the product delays and cost overruns that come from racing to fix previously unknown faults.
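
One way to see why liquid takes over at these power levels is a steady-state energy balance, P = m_dot × c_p × ΔT, solved for the coolant flow needed to carry away a given heat load. The sketch below is a rough illustration, not a cold plate design method: the fluid properties are textbook room-temperature approximations, and the 15 K coolant temperature rise is an assumed value picked only for the comparison. The names `Coolant` and `volumetric_flow_m3_s` are hypothetical helpers.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Coolant:
    name: str
    density_kg_m3: float         # approximate, near room temperature
    specific_heat_j_kg_k: float  # approximate, near room temperature


AIR = Coolant("air", 1.2, 1005.0)
WATER = Coolant("water", 997.0, 4186.0)


def volumetric_flow_m3_s(power_w: float, delta_t_k: float, coolant: Coolant) -> float:
    """Flow needed to absorb power_w with a coolant temperature rise of delta_t_k.

    From P = m_dot * c_p * dT, with m_dot = density * volumetric flow.
    """
    mass_flow_kg_s = power_w / (coolant.specific_heat_j_kg_k * delta_t_k)
    return mass_flow_kg_s / coolant.density_kg_m3


POWER_W = 750.0   # accelerator power level cited in the text
DELTA_T_K = 15.0  # assumed coolant temperature rise

air_flow = volumetric_flow_m3_s(POWER_W, DELTA_T_K, AIR)
water_flow = volumetric_flow_m3_s(POWER_W, DELTA_T_K, WATER)

print(f"air:   {air_flow * 1000:.1f} L/s")
print(f"water: {water_flow * 1000 * 60:.2f} L/min")
print(f"air needs roughly {air_flow / water_flow:.0f}x the volume of water")
```

Under these assumptions, air has to move thousands of times more volume than water to carry the same heat load. This simple balance says nothing about hot spots or channel routing, which is where full simulation comes in.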

Iterate Faster, Model More

According to AMD Systems Thermal Engineer and regular Ansys Discovery user Eurydice Kanimba, moving Ansys Explore from the CPU to the GPU and supporting AMD Ryzen AI Max PRO processors both pay tremendous dividends. "GPU-accelerated simulation has fundamentally changed how quickly we can make decisions," said Kanimba. "What used to take one to two hours on CPU now solves in minutes on GPU, allowing us to iterate, compare designs, and converge on solutions much faster. Advanced systems built with AMD Ryzen AI PRO processors provide the large memory footprint we need at a much lower cost, making advanced simulation more accessible."

Ansys' goal in supporting AMD graphics solutions via AMD ROCm™ and HIP is to meet its customers where they are and to support the solutions they increasingly need today. In this context, AMD Ryzen AI Max PRO processors fill a unique niche between the inadequate level of VRAM found on lower-end, more affordable workstation graphics cards and the extremely high price demanded by the handful of GPUs that field 48GB of VRAM or more. Additionally, laptops and advanced SFF (small form factor) workstations built around AMD Ryzen AI Max PRO processors are powerful enough to eliminate the model simplification steps engineers must sometimes take to ensure models fit within narrow memory limits.

"Simulation users tend to spend a disproportionate amount of their time cleaning geometry, fixing things, and prepping a design," Walsh says. "With the GPU performance these processors deliver, you can skip it. You can have fully defined screws, threads, and components inside your design, and we take care of that automatically. You set up your inputs and hit solve. It is just that simple."

Conclusion

For thermal and design engineering teams, the combination of high memory capacity, quick simulation speeds, and achieved accuracy delivers a benefit greater than the sum of its parts. A cold plate redesign that would once have required a simplified model, a long wait for remote compute, or both can now run in full fidelity on a workstation that fits under a desk or in a lap. Walsh frames the broader implication plainly: "This opens new opportunities for those considering AMD processor-based systems like the HP Z2 Mini G1a. They can access an enormous amount of GPU capability at an affordable price. The AMD Ryzen AI Max+ PRO 395 processor gives them real choices, so they are no longer locked into a single hardware platform."

In the past, GPU VRAM limitations and cost constraints shaped the types of simulation that were possible. Systems built with AMD Ryzen AI Max PRO processors reverse that relationship by allowing simulation requirements to inform hardware selection without spending up to five figures on a single discrete GPU. This increases customer choice, particularly when choosing a mobile or diminutive desktop workstation, and helps engineers adjust a quickly evolving product from where they’re working, whether that’s inside an office or halfway around the world.