Day 0 Support for Xiaomi MiMo-V2.5-Pro on AMD Instinct GPUs

Apr 28, 2026

We are excited to announce Day 0 support for Xiaomi MiMo-V2.5-Pro, the most capable model Xiaomi has ever built, running natively on AMD Instinct™ GPUs. This technical blog presents a Day 0 deployment walkthrough for Xiaomi’s brand new MiMo model on the AMD Instinct GPU by leveraging AMD ROCm™ 7 software and SGLang/ATOM upstream optimizations.

MiMo-V2.5 Series enters public beta on April 23, with general availability and open-source release following on April 28. As one of the first silicon partners to deliver optimized support from day one, AMD is ready for developers on launch.

What MiMo-V2.5-Pro Is

Purpose-built for the hardest problems in AI, MiMo-V2.5-Pro rivals the world's top agent models, Claude Opus 4.6 and GPT-5.4 among them; across general-purpose agent tasks, complex software engineering, and long-horizon execution. A major leap from its predecessor, the new model delivers sharper instruction-following in agentic workflows, picking up on implicit requirements in context and maintaining logical consistency over extended sessions.

Enterprise teams will find a natural fit for large-scale code generation, deep data analysis, and tight integration with agent frameworks like OpenClaw, Hermes Agent, and Claude Code.

Optimized for AMD Silicon

AMD has tuned MiMo-V2.5-Pro end-to-end on proprietary silicon, achieving high-throughput, low-latency inference that holds up under heavy workloads and million-token contexts.

- Hardware: With 288 GB on-chip memory and 8 TB/s bandwidth, AMD Instinct MI355X comfortably drives inference at MiMo-V2.5-Pro's full 1T-parameter scale and 1M-token context window.

- Software Stack: AMD ROCm software offers native compatibility with vLLM, PyTorch, SGLang, and other widely adopted AI frameworks.

- Key Optimizations: MiMo-V2.5-Pro is day-0 supported in the AMD ATOM inference engine with AITER-powered efficient kernels, along with multiple kernel fusions, FP8 KV cache optimizations, Multi-Token Prediction with an EAGLE proposer, and piecewise torch.compile. It also day-0 supports SpecV2-based multi-layer EAGLE inference with SGLang.

Deployment Guide

Run MiMo-V2.5-Pro with ATOM on AMD Instinct GPUs

Step 1: Get Started with AMD ATOM

Please use the latest pre-built upstream docker image for the MI355x GPU

docker run -d -it \

--ipc=host \

--network=host \

--privileged \

--cap-add=CAP_SYS_ADMIN \

--device=/dev/kfd \

--device=/dev/dri \

--device=/dev/mem \

--group-add video \

--cap-add=SYS_PTRACE \

--security-opt seccomp=unconfined \

--shm-size 32G \

--entrypoint "/bin/bash" \

--name mimov25pro \

aigmkt/mimo-v2.5-pro-atom:latest

Step 2: Start ATOM serving

python -m atom.entrypoints.openai_server \

--model XiaomiMiMo/MiMo-V2.5-Pro \

-tp 8\

--trust-remote-code \

--kv_cache_dtype fp8

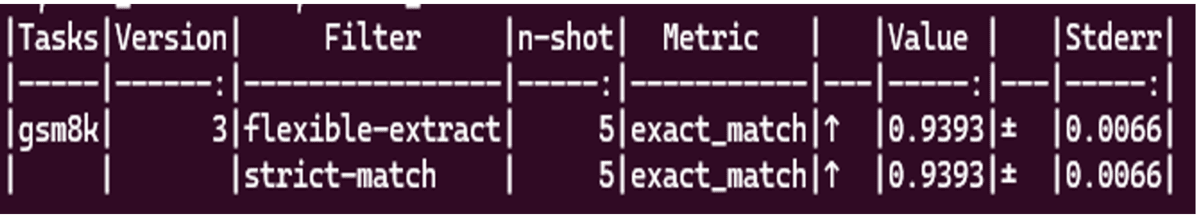

Step 3: Accuracy Evaluation with lm_eval

lm_eval --model local-completions \

--model_args model= XiaomiMiMo/MiMo-V2.5-Pro,base_url=http://localhost:8000/v1/completions,num_concurrent=64,max_retries=3,tokenized_requests=False \

--tasks gsm8k \

--num_fewshot 5

Run MiMo-v2.5-Pro with SGLang on AMD Instinct GPUs

Step 1: Get Started with SGLang

Please use the latest pre-built upstream docker image for the MI355x GPU

docker run -d -it \

--ipc=host \

--network=host \

--privileged \

--cap-add=CAP_SYS_ADMIN \

--device=/dev/kfd \

--device=/dev/dri \

--device=/dev/mem \

--group-add video \

--cap-add=SYS_PTRACE \

--security-opt seccomp=unconfined \

--shm-size 32G \

--entrypoint "/bin/bash" \

--name mimov2.5pro \

aigmkt/mimo-v2.5-pro-sglang:latest

Step 2: Start SGLang serving

export SGLANG_ENABLE_SPEC_V2=1

python3 -m sglang.launch_server \

--model-path XiaomiMiMo/MiMo-V2.5-Pro \

--tp-size 8 \

--decode-log-interval 1 \

--host 0.0.0.0 \

--port 30000 \

--trust-remote-code \

--disable-radix-cache \

--watchdog-timeout 1000000 \

--mem-fraction-static 0.8 \

--chunked-prefill-size 131072 \

--max-running-requests 64 \

--speculative-algorithm EAGLE \

--speculative-num-steps 3 \

--speculative-eagle-topk 1 \

--speculative-num-draft-tokens 4 \

--enable-multi-layer-eagle \

--attention-backend triton

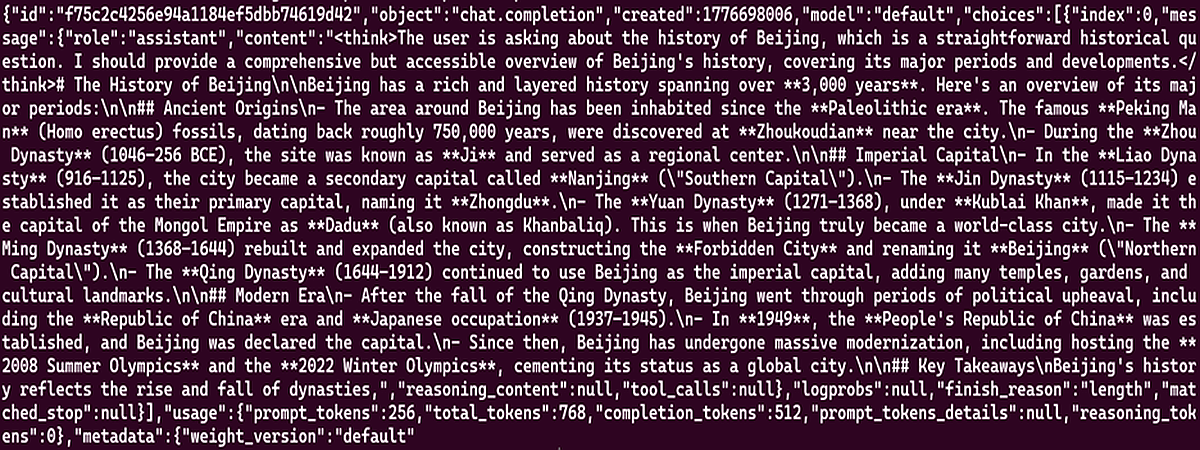

Step 3: Chat Completions API

curl http://localhost:30000/v1/chat/completions \

-H "Content-Type: application/json" \

-d '{

"messages": [{"role": "user", "content": "What is the history of Beijing?"}],

"max_tokens": 512,

"temperature": 0.7

}'

If the serve runs well, you can see the following outputs:

Acknowledgements

AMD team members who contributed to this effort: Andy Luo, Fan Wu, Bill He, Peng Sun, Carlus Huang, Lingpeng Jin, Gilbert Lei, Hattie Wu, Xicheng Feng, and the Xiaomi MiMo team.

Looking Ahead

This blog presents the Day 0 support for Xiaomi’s MiMo-V2.5-Pro model on AMD Instinct GPUs. By following this guide, you have learned how to deploy MiMo-V2.5-Pro using SGLang/ATOM to leverage advanced tool-calling capabilities for agentic workloads, as well as how to run coding and Skills-driven applications locally with this flagship model.

This enablement ensures that your development team can immediately start building robust, agent-centric platforms on the latest AMD hardware. Subsequent posts will dive deeper into kernel-level profiling, custom attention optimizations, and ongoing co-design efforts between the AMD ROCm software stack and MiMo model advancements. Stay tuned.

Get Started

- MiMo Open Platform: platform.xiaomimimo.com

- Join AMD AI Developer Program to access AMD developer cloud credits, expert support, exclusive training, and community.

- Visit the ROCm AI Developer Hub for additional tutorials, open-source projects, blogs, and other resources for AI development on AMD GPUs.

- Explore AMD ROCm Software.

- Learn more about AMD Instinct GPUs.

- Inference Image

- ATOM: aigmkt/mimo-v2.5-pro-atom:latest

- SGLang: aigmkt/mimo-v2.5-pro-sglang:latest