Reimagining AI-Native Education Through Multi-Agent Interactive Classroom on AMD ROCm

May 11, 2026

The Compute Challenge of AI-Native Education

The latest generation of online education has moved past chatbots that answer questions on the side of a static MOOC (Massive Open Online Course). An emerging AI-Native model of education is instead built around AI from the ground up: an AI Teacher delivers a lecture on a shared whiteboard, AI Classmates debate from distinct perspectives, and a director agent decides who speaks next. This model is already producing concrete results. At Tsinghua University, a two-month deployment of the Towards Artificial General Intelligence (TAGI) course with this Multi-Agent Interactive Classroom engaged 319 students who completed the course; 86.3% actively interacted with the AI agents, and nearly 80% of class time was spent asking questions or initiating new ideas [3]. Companion studies report high cognitive engagement and significantly higher perceived learner control than either a human-led course or a chatbot-assisted MOOC [1, 2].

The AMD University Program (AUP) has been collaborating with the Tsinghua University team behind OpenMAIC, the open-source reference implementation of the MAIC (Massive AI-empowered Course) framework [3], to bring this AI platform onto AMD silicon. AUP is also drawing on OpenMAIC’s pedagogical principles to evolve the AUP Learning Cloud, which delivers ROCm-based university course materials to students and educators worldwide.

What is OpenMAIC?

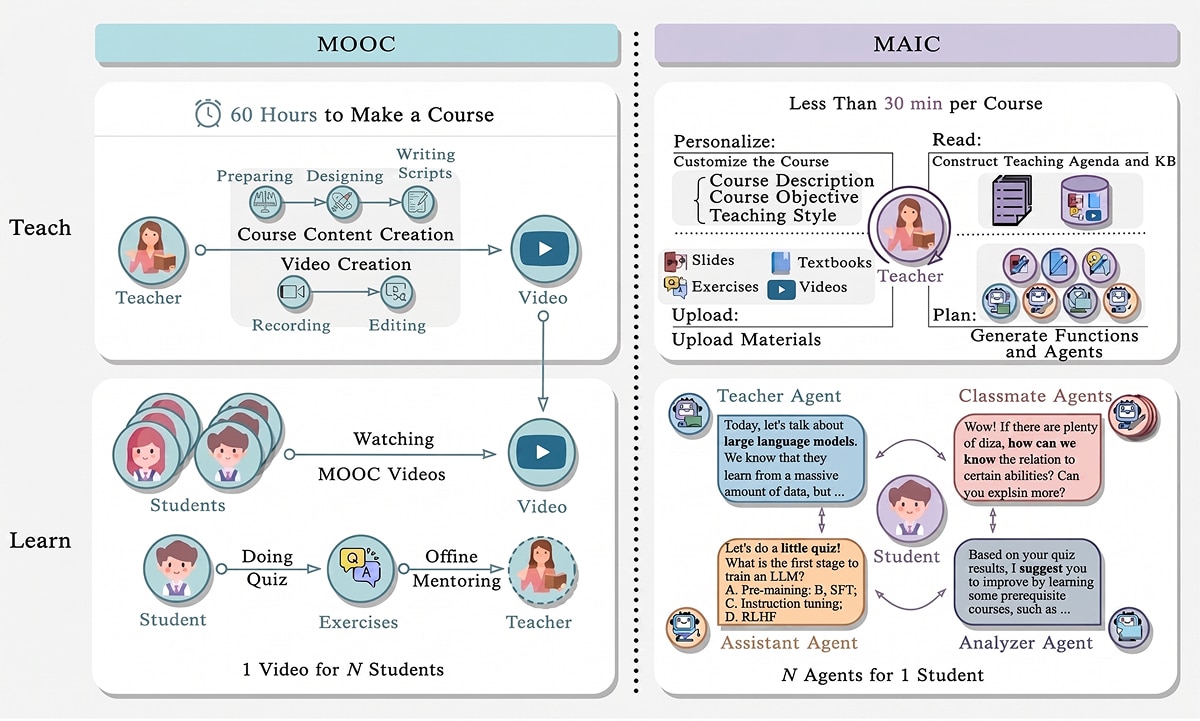

OpenMAIC (Open Multi-Agent Interactive Classroom) is the open-source implementation of the MAIC framework. MAIC reframes the online classroom around a simple inversion: where a MOOC is one video for N students, a MAIC is N agents for one student (Figure 1) [3].

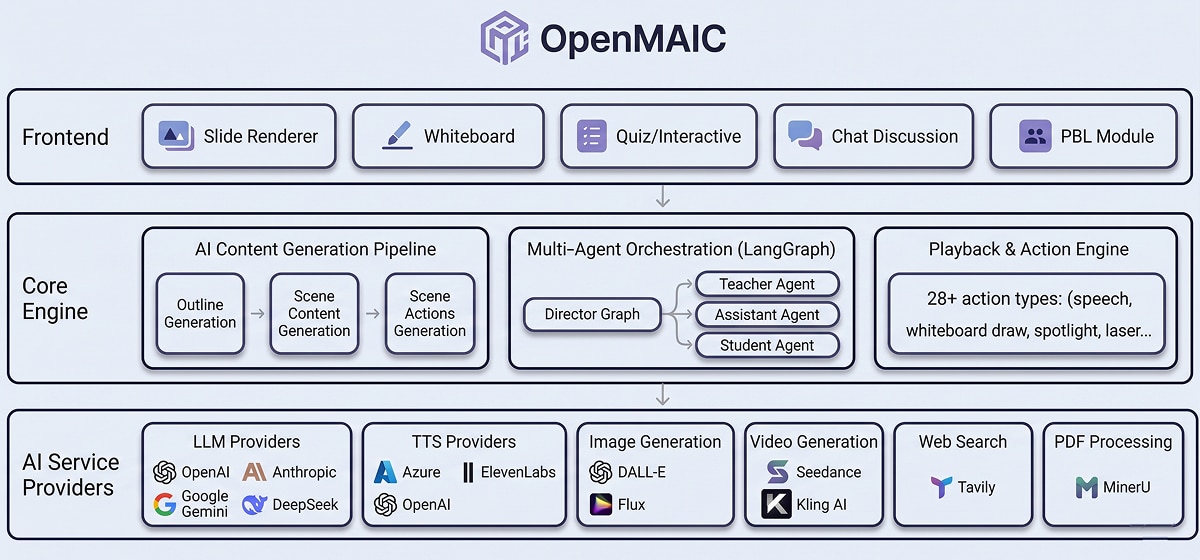

OpenMAIC turns any topic or document into an interactive classroom where AI teachers and classmates speak, draw on a shared whiteboard, and run quizzes and project-based learning activities around each learner. The system is a three-tier stack: a classroom Frontend, a Core Engine (content generation pipeline plus LangGraph-based multi-agent orchestration), and a pluggable AI service provider layer for LLM, speech, image, and video backends.
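To make the idea of a pluggable provider layer concrete, here is a minimal Python sketch that assumes nothing about OpenMAIC's actual code: each service kind maps to a swappable backend, so callers keep the same call site when a remote LLM is replaced by a local one. `ProviderRegistry`, `remote_llm`, and `local_llm` are hypothetical names, and the backends are stubs rather than real HTTP clients.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict

# A backend is modeled as a plain callable: request dict in, response dict out.
Provider = Callable[[dict], dict]


@dataclass
class ProviderRegistry:
    """Maps a service kind ("llm", "asr", "tts", "image", "video") to the
    backend currently plugged in for it (hypothetical, for illustration)."""

    providers: Dict[str, Provider] = field(default_factory=dict)

    def register(self, kind: str, provider: Provider) -> None:
        # Registering the same kind again swaps the backend; callers are unchanged.
        self.providers[kind] = provider

    def call(self, kind: str, request: dict) -> dict:
        if kind not in self.providers:
            raise KeyError(f"no provider registered for {kind!r}")
        return self.providers[kind](request)


# Stand-in backends; a real deployment would wrap HTTP clients here.
def remote_llm(request: dict) -> dict:
    return {"backend": "remote", "text": f"Lecture script on {request['topic']}"}


def local_llm(request: dict) -> dict:
    return {"backend": "local", "text": f"Quick answer about {request['topic']}"}


registry = ProviderRegistry()
registry.register("llm", remote_llm)
first = registry.call("llm", {"topic": "gradient descent"})
registry.register("llm", local_llm)  # swap backends without touching callers
second = registry.call("llm", {"topic": "gradient descent"})
print(first["backend"], second["backend"])  # remote local
```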

The MLLM (Multimodal LLM) service requirements of OpenMAIC are not arbitrary; they derive from concrete educational sub-tasks, each of which the Tsinghua team has studied and published on:

- Read & Plan (course preparation). A multimodal LLM extracts text, visuals, and a knowledge taxonomy from uploaded slides, then generates lecture scripts, quizzes, and instantiated agents. This stage is grounded in Slide2Lecture [5], which converts decks into structured teaching agendas without fine-tuning, and EduCraft [6], which automates pedagogical lecture-script generation from long-context multimodal presentations. LongWriter-V [4] further demonstrates that compact 7B vision-language models can generate ultra-long (10,000+ words), high-fidelity multimodal outputs, which is precisely what textbook-length lecture scripts demand.

- Multi-agent classroom (the live session). A LangGraph-powered Session Controller orchestrates real-time learning. The architecture follows SimClass [7], which used Flanders Interaction Analysis and Community-of-Inquiry frameworks to demonstrate that LLM-empowered agents create both teacher-student and student-student interactions that improve learning.
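The director's job of deciding who speaks next can be illustrated with a deliberately simple rule-based policy. This is a hypothetical stand-in written in plain Python, not OpenMAIC's director and not LangGraph code: the teacher opens the session and answers a student's question, and otherwise the least-heard classmate speaks, skipping the previous speaker when another candidate exists. `next_speaker` and the roster names are illustrative.

```python
from collections import Counter
from typing import List, Tuple

Turn = Tuple[str, str]  # (speaker_id, utterance)


def next_speaker(transcript: List[Turn], teacher: str, classmates: List[str]) -> str:
    """Toy director policy (an illustrative assumption, not OpenMAIC's):
    the teacher opens and answers student questions; otherwise the
    least-heard classmate speaks, avoiding the previous speaker if possible."""
    if not transcript:
        return teacher
    last_speaker, last_text = transcript[-1]
    if last_speaker == "student" and last_text.rstrip().endswith("?"):
        return teacher
    counts = Counter(speaker for speaker, _ in transcript)
    candidates = [c for c in classmates if c != last_speaker] or classmates
    # min() returns the first minimum, so ties resolve in roster order.
    return min(candidates, key=lambda c: counts[c])


roster = ["skeptic", "note_taker"]
log: List[Turn] = [("teacher", "Today: backpropagation."),
                   ("student", "Why do we need the chain rule?")]
print(next_speaker(log, "teacher", roster))  # teacher
log.append(("teacher", "Because each layer's gradient depends on the next."))
print(next_speaker(log, "teacher", roster))  # skeptic
log.append(("skeptic", "Doesn't that make deep nets unstable?"))
print(next_speaker(log, "teacher", roster))  # note_taker
```

A production director would weigh far richer signals (position in the lesson plan, the content of the question, engagement), but the interface, transcript in and speaker out, is the kind of step a graph-based controller could invoke once per turn.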

Edge-Cloud Collaborative Architecture on AMD ROCm

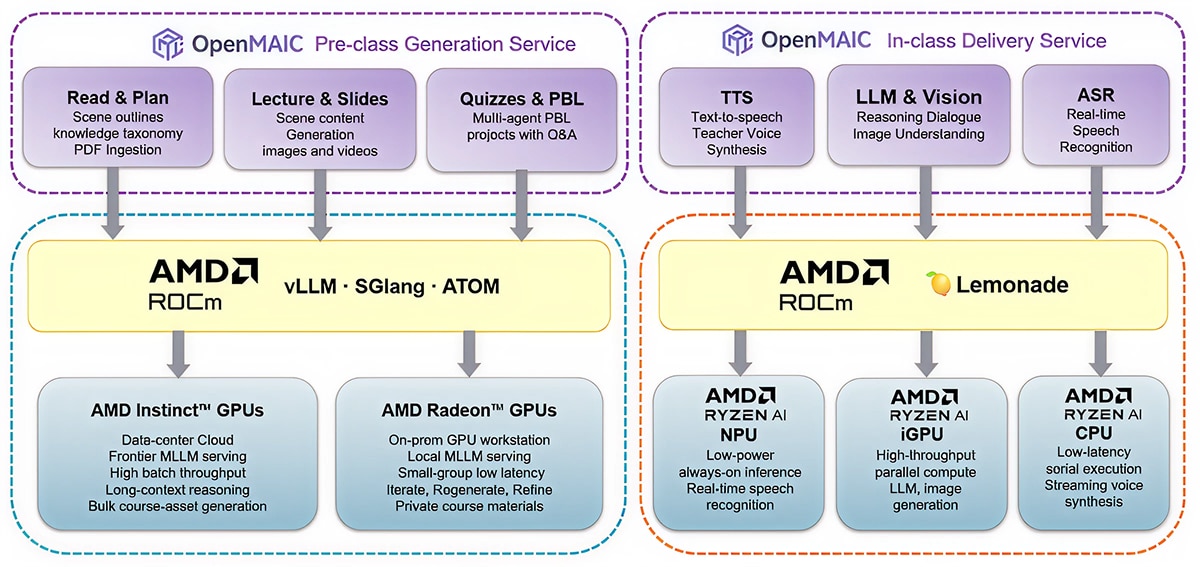

OpenMAIC’s two regimes, pre-class generation and in-class interaction, sit on opposite sides of the quality-latency-privacy space, and AMD’s full-stack GPU portfolio, from cloud AMD Instinct™ accelerators to client-side AMD Radeon™ and AMD Ryzen™ AI processors, handles both with the same software contract:

- A consolidated open software stack from cloud to edge. AMD ROCm™ runs the same open-source serving stack (vLLM, SGLang, AMD ATOM) across AMD’s full GPU portfolio, so a course can be authored on the same software contract that the rest of the AI ecosystem already uses, without re-engineering the AI service layer between cloud and local hosts.

- Data privacy by construction. Real-time student dialogue, voice, and engagement signals stay on-device and never need to leave the classroom, which matters for school districts with strict data-protection rules and for university IRBs (Institutional Review Boards) that govern educational research [1].

- A natural edge-cloud split for multimodal workloads. AMD ROCm covers the heavy pre-class generation side, while AMD Ryzen™ AI plus the Lemonade local AI server cover the latency-sensitive in-class side, with both halves exposing the same OpenAI-compatible API to OpenMAIC’s pluggable provider layer.
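Because both halves expose an OpenAI-compatible API, the same request-building code can target either side; only the base URL and model name change. The sketch below uses only the Python standard library and builds a `/chat/completions` request. The host names, model names, and the `/api/v1` path for the local Lemonade server are placeholder assumptions; check each server's documentation for its actual base URL and any required authorization header.

```python
import json
import urllib.request
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class Endpoint:
    base_url: str  # placeholder: use the base URL your server actually reports
    model: str     # placeholder model name


# Illustrative endpoints (assumptions, not documented defaults): a vLLM/SGLang
# server under ROCm for pre-class generation, a local Lemonade server in class.
PRE_CLASS = Endpoint("http://rocm-server.example.edu:8000/v1", "frontier-generator")
IN_CLASS = Endpoint("http://localhost:8000/api/v1", "local-chat-model")


def chat_request(endpoint: Endpoint, system: str, user: str) -> Tuple[str, bytes]:
    """Build the URL and JSON body of an OpenAI-style chat completion call.
    Only the URL and the model name depend on the endpoint."""
    url = endpoint.base_url.rstrip("/") + "/chat/completions"
    body = {
        "model": endpoint.model,
        "messages": [
            {"role": "system", "content": system},
            {"role": "user", "content": user},
        ],
    }
    return url, json.dumps(body).encode("utf-8")


def send(url: str, body: bytes) -> dict:
    """POST the request and decode the JSON reply (needs a running server)."""
    req = urllib.request.Request(
        url, data=body, headers={"Content-Type": "application/json"}, method="POST"
    )
    with urllib.request.urlopen(req) as resp:
        return json.loads(resp.read().decode("utf-8"))


url_a, body_a = chat_request(PRE_CLASS, "You write lecture scripts.", "Outline lesson 1.")
url_b, body_b = chat_request(IN_CLASS, "You write lecture scripts.", "Outline lesson 1.")
print(url_a)  # http://rocm-server.example.edu:8000/v1/chat/completions
print(url_b)  # http://localhost:8000/api/v1/chat/completions
```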

Pre-class generation on AMD ROCm. Scene outlines, lecture scripts, quizzes, and PBL (project-based learning) projects need frontier-class reasoning and complex structured output. They run once per course and are cached as structured course assets, so they fit naturally on AMD ROCm running vLLM, SGLang, or AMD ATOM, on AMD Instinct™ GPUs at the data center tier or on AMD Radeon™ GPUs on a teacher’s workstation. AMD’s Day-0 enablement of leading open and frontier models (for example Qwen3.6, Gemma 4, Xiaomi MiMo-V2.5-Pro, and Baidu Ernie Image) means OpenMAIC can adopt new generators on AMD ROCm as soon as they ship.
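Since pre-class assets are generated once and then reused, a content-addressed cache is one natural way to store them. In this minimal sketch (an assumption, not OpenMAIC's storage format), the cache key is a SHA-256 hash of the generation stage, the generator model name, and the source document, so changing any of them triggers regeneration. `get_or_generate` and `fake_outline_generator` are hypothetical; the stub stands in for a call to a ROCm-hosted model.

```python
import hashlib
import json
import tempfile
from pathlib import Path
from typing import Callable, List


def asset_key(document: str, model: str, stage: str) -> str:
    """Content-address an asset by everything that determines it."""
    h = hashlib.sha256()
    for part in (stage, model, document):
        h.update(part.encode("utf-8"))
        h.update(b"\0")  # separator so ("ab", "c") and ("a", "bc") differ
    return h.hexdigest()


def get_or_generate(cache_dir: Path, document: str, model: str, stage: str,
                    generate: Callable[[str], dict]) -> dict:
    """Return the cached asset if present; otherwise run the expensive
    generator once and store its structured output as JSON."""
    cache_dir.mkdir(parents=True, exist_ok=True)
    path = cache_dir / f"{asset_key(document, model, stage)}.json"
    if path.exists():
        return json.loads(path.read_text(encoding="utf-8"))
    asset = generate(document)
    path.write_text(json.dumps(asset), encoding="utf-8")
    return asset


calls: List[str] = []


def fake_outline_generator(document: str) -> dict:
    # Stand-in for a request to a ROCm-hosted model.
    calls.append(document)
    return {"scenes": [f"Introduction to {document}", "Worked example", "Quiz"]}


with tempfile.TemporaryDirectory() as tmp:
    first = get_or_generate(Path(tmp), "linear regression", "generator-v1",
                            "outline", fake_outline_generator)
    second = get_or_generate(Path(tmp), "linear regression", "generator-v1",
                             "outline", fake_outline_generator)

print(len(calls))  # 1: the second request is served from the cache
```

Keying on the model name as well as the document means adopting a newer generator naturally invalidates old assets instead of silently mixing outputs from two models.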

In-class interaction on AMD Ryzen™ AI. Director routing, multi-agent dialog, quiz grading, ASR (Automatic Speech Recognition), and TTS (Text-to-Speech) run every turn against student speech that should not leave the device. An AMD Ryzen™ AI PC co-locates a Radeon™ iGPU (integrated GPU), an AMD XDNA™ NPU (Neural Processing Unit), and a unified memory pool, keeping an LLM, a speech model, and an image model co-resident. AMD's Lemonade local AI server ties these engines behind one API, routing each modality to its best target.
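The per-turn workload listed above can be written out as an ordered list of calls against one local OpenAI-style server. This sketch assumes OpenAI API route names (`/audio/transcriptions`, `/chat/completions`, `/audio/speech`) and assumes quiz grading is done through a chat completion; neither is confirmed here for Lemonade. It also refuses non-loopback hosts, a simple guard that keeps student speech on the machine. Which engine (iGPU, NPU, or CPU) serves each request is left to the server. `plan_turn` and `ROUTES` are hypothetical names.

```python
from typing import Dict, List, Tuple
from urllib.parse import urlparse

# Assumed mapping from in-class stage to an OpenAI-style route. The route
# names follow the OpenAI API; check which ones your local server exposes.
ROUTES: Dict[str, str] = {
    "asr": "/audio/transcriptions",
    "director": "/chat/completions",
    "agent_reply": "/chat/completions",
    "grade": "/chat/completions",
    "tts": "/audio/speech",
}

LOOPBACK_HOSTS = {"localhost", "127.0.0.1", "::1"}


def plan_turn(base_url: str, grade_quiz: bool = False) -> List[Tuple[str, str]]:
    """Return the ordered (stage, url) calls for one classroom turn.
    Refuses non-loopback hosts so student audio and text stay on the device."""
    host = urlparse(base_url).hostname
    if host not in LOOPBACK_HOSTS:
        raise ValueError(f"in-class traffic must stay local, got host {host!r}")
    stages = ["asr", "director", "agent_reply"]
    if grade_quiz:
        stages.append("grade")
    stages.append("tts")
    base = base_url.rstrip("/")
    return [(stage, base + ROUTES[stage]) for stage in stages]


for stage, url in plan_turn("http://localhost:8000/api/v1", grade_quiz=True):
    print(f"{stage:12} {url}")
```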

The result is a clean cloud-to-edge story on a single software stack: pre-class generation runs under ROCm on a Radeon-class workstation or Instinct-class server, while in-class interaction runs on a Lemonade server on a Ryzen AI PC. Both halves share one OpenAI-compatible API, so a course built once can be replayed in any classroom. Integration details are documented in the OpenMAIC repository.

Looking Forward: A Platform for AI Classrooms

With both halves of the platform covered by AMD silicon and a fully open software stack, OpenMAIC becomes a natural platform for the next round of AI-Native education research and product work:

- Self-contained classroom appliance. Lemonade’s Embeddable variant enables packaging OpenMAIC as a single installable application: a turnkey AI classroom on any AMD Ryzen™ AI PC, with no separate server installations, cloud accounts, or IT expertise required. For schools without reliable internet or with strict data-privacy regulations, this model removes the dependence on both network connectivity and off-device data processing.

- Custom agents and project-based learning. OpenMAIC’s agent registry supports specialized AI Classmates, domain-specific Teaching Assistants, and new pedagogical agent types running locally. A course, a compliance training session, or an internal onboarding module can be generated from an existing document in minutes, with voice narration, peer discussion, and hands-on activities. The Tsinghua team’s perception study highlights emotional resonance as a key frontier for the next generation of multi-agent classrooms [1].
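A registry of specialized agents can be as small as a table of named roles and personas that are folded into each agent's system prompt. The sketch below is hypothetical (`AgentSpec`, `AgentRegistry`, and the example agents are illustrative names, not OpenMAIC's agent registry API) and shows how a domain-specific teaching assistant sits alongside ordinary classmates.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class AgentSpec:
    name: str
    role: str     # e.g. "teacher", "classmate", "teaching assistant"
    persona: str  # one-line description folded into the system prompt

    def system_prompt(self, course: str) -> str:
        return (f"You are {self.name}, acting as a {self.role} "
                f"in a course on {course}. {self.persona}")


class AgentRegistry:
    """Hypothetical registry: names must be unique; roles group agents."""

    def __init__(self) -> None:
        self._agents: Dict[str, AgentSpec] = {}

    def register(self, spec: AgentSpec) -> None:
        if spec.name in self._agents:
            raise ValueError(f"agent {spec.name!r} is already registered")
        self._agents[spec.name] = spec

    def by_role(self, role: str) -> List[AgentSpec]:
        # dicts preserve insertion order, so agents return in registration order
        return [a for a in self._agents.values() if a.role == role]


agents = AgentRegistry()
agents.register(AgentSpec("Prof. Lin", "teacher", "Explain step by step."))
agents.register(AgentSpec("Sam", "classmate", "Challenge weak arguments."))
agents.register(AgentSpec("Ada", "classmate", "Ask for concrete examples."))
agents.register(AgentSpec("Chem TA", "teaching assistant", "Check lab safety steps."))

for agent in agents.by_role("classmate"):
    print(agent.system_prompt("organic chemistry"))
```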

Conclusion

By combining OpenMAIC's pedagogical multi-agent architecture with AMD's full GPU portfolio and the AMD ROCm software stack, this integration delivers a cloud-to-edge architecture for AI-Native education that lowers cost, preserves privacy, and matches the multimodal demands of a live classroom: heavyweight, frontier-quality course generation in the cloud, paired with low-latency, privacy-preserving multi-agent interaction at the edge.

Additional Resources

- Try the OpenMAIC live demo or visit the GitHub repository

- Visit the AMD University Program (AUP)

- Explore the AUP Learning Cloud

- Explore AMD ROCm Software

- Learn more about Lemonade

- Learn more about AMD Instinct™ GPUs

- Learn more about AMD Radeon™ Professional Graphics

- Learn more about AMD Ryzen™ AI Processors

Acknowledgments

Thanks to Ji-Fan Yu, Daniel Zhang-Li, Zhe-Yuan Zhang, Yu-Cheng Wang, Danqi Zheng and the broader research and development team at Tsinghua University for pioneering the OpenMAIC framework and for their technical collaboration on the AMD integration.

References

[1] Z. Hao, F. Qin, J. Jiang, J. Cao, J. Yu, Z. Liu, and Y. Zhang, “AI as Learning Partners: Students’ Interactions and Perceptions in a Simulated Classroom with Multiple LLM-Powered Agents,” in Proc. Int. Conf. Learning Sciences (ICLS), 2025.

[2] F. Qin, Z. Hao, J. Yu, Z. Liu, and Y. Zhang, “AI Instructional Agent Improves Student’s Perceived Learner Control and Learning Outcome: Empirical Evidence from a Randomized Controlled Trial,” 2025.

[3] J. Yu, D. Zhang-Li, Z. Zhang, et al., “From MOOC to MAIC: Reimagine Online Teaching and Learning Through LLM-Driven Agents,” Journal of Computer Science and Technology, 2026, pp. 1-21.

[4] S. Tu, Y. Wang, D. Zhang-Li, Y. Bai, J. Yu, Y. Wu, L. Hou, H.-Q. Liu, Z. Liu, B. Xu, and J. Li, “LongWriter-V: Enabling Ultra-Long and High-Fidelity Generation in Vision-Language Models,” 2025.

[5] D. Zhang-Li, Z. Zhang, J. Yu, J. L. J. Yin, S. Tu, L. Gong, H. Wang, Z. Liu, H. Liu, L. Hou, and J. Li, “Awaking the Slides: A Tuning-Free and Knowledge-Regulated AI Tutoring System via Language Model Coordination,” 2024.

[6] Y. Wang, J. Yu, D. Zhang-Li, J. J. Y. Lim, S. Tu, H. Li, Z. Liu, H. Liu, L. Hou, J. Li, and B. Xu, “EduCraft: A System for Generating Pedagogical Lecture Scripts from Long-Context Multimodal Presentations,” 2025.

[7] Z. Zhang, D. Zhang-Li, J. Yu, L. Gong, J. Zhou, Z. Hao, J. Jiang, J. Cao, H. Liu, Z. Liu, L. Hou, and J. Li, “Simulating Classroom Education with LLM-Empowered Agents,” 2025.