Agentic AI Changes the CPU/GPU Equation

May 07, 2026

There’s a conversation happening now in a lot of infrastructure planning meetings that goes something like this: “Agentic AI is going to change the CPU-to-GPU ratio. So, we just need to add more CPUs to our GPU servers, right?”

It sounds logical. It’s also where a lot of people are getting it wrong.

The shift from chatbot-style AI to agentic AI is not just about putting a few more CPUs next to the same GPU-heavy rack design. It’s bigger than that. It’s a structural shift in data center architecture. Agentic AI is driving demand for entirely new racks of CPU servers that sit alongside GPU infrastructure and power the work of all these agents.

For enterprise IT leaders, there is a lesson in all this: Agentic AI rewrites the AI infrastructure equation.

At AMD, we’ve been tracking this shift closely. We previously outlined a server CPU market growing at 18% annually, but the structural increase in compute requirements driven by agents changes the math. We now expect the total addressable market for server CPUs to grow at more than 35% annually, reaching more than $120 billion by 2030.
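To see how much the gap between those two growth rates compounds, a quick arithmetic check helps. The sketch below is illustrative only: the five-year horizon is an assumption chosen for the example rather than a figure from the forecast, and 35% is treated as a floor because the forecast is "more than 35%."

```python
def growth_multiple(annual_rate: float, years: int) -> float:
    """Return how many times larger a market is after compounding annually."""
    return (1.0 + annual_rate) ** years


YEARS = 5  # assumed horizon, for illustration only

for label, rate in [("prior outlook", 0.18), ("agentic outlook (floor)", 0.35)]:
    multiple = growth_multiple(rate, YEARS)
    print(f"{label}: {rate:.0%} per year -> {multiple:.2f}x over {YEARS} years")
```

Under that assumed horizon, 18% annual growth compounds to roughly 2.3x, while 35% compounds to roughly 4.5x.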

The First Wave: Chatbot AI Was Primarily Model Responses

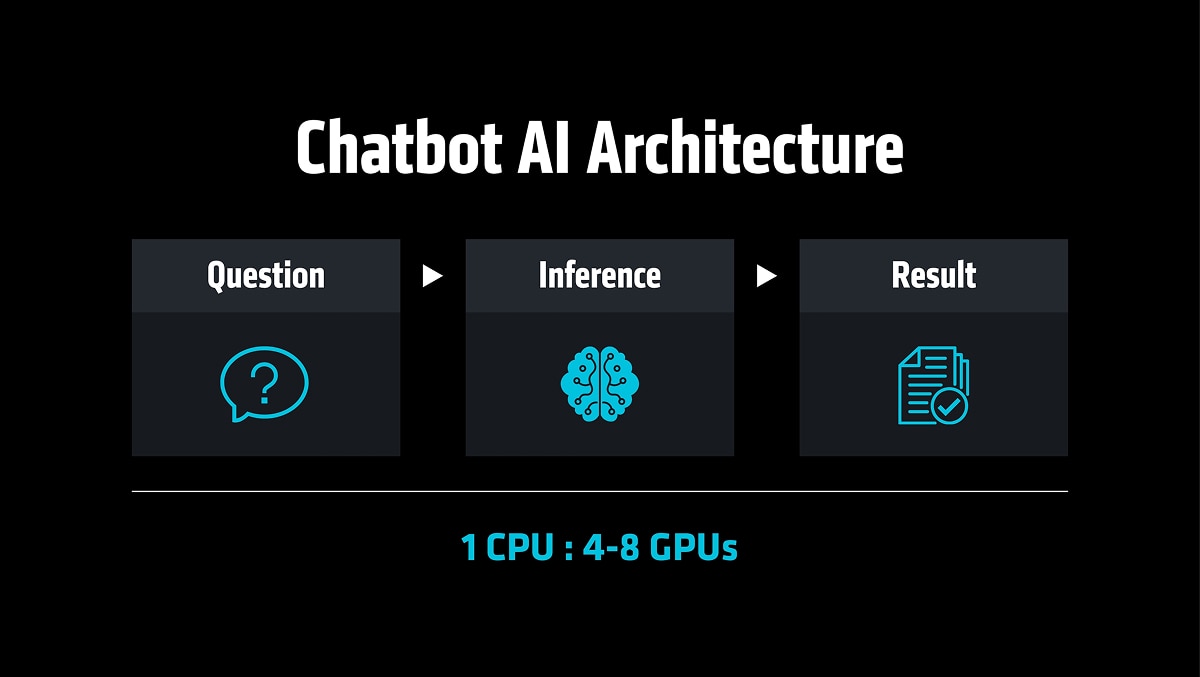

Generative AI’s first wave was built around a fairly simple pattern. A user asked a question; the application sent a prompt to a model; the model generated a response; and the application returned it.

That architecture naturally drove GPU-centric designs. In those deployments, a CPU acted as the head node for a server with four to eight GPUs. That single head-node CPU handled scheduling, I/O and system management, while the GPUs did the heavy math.

Agentic AI Is Not Just ‘Chat Plus Tools’

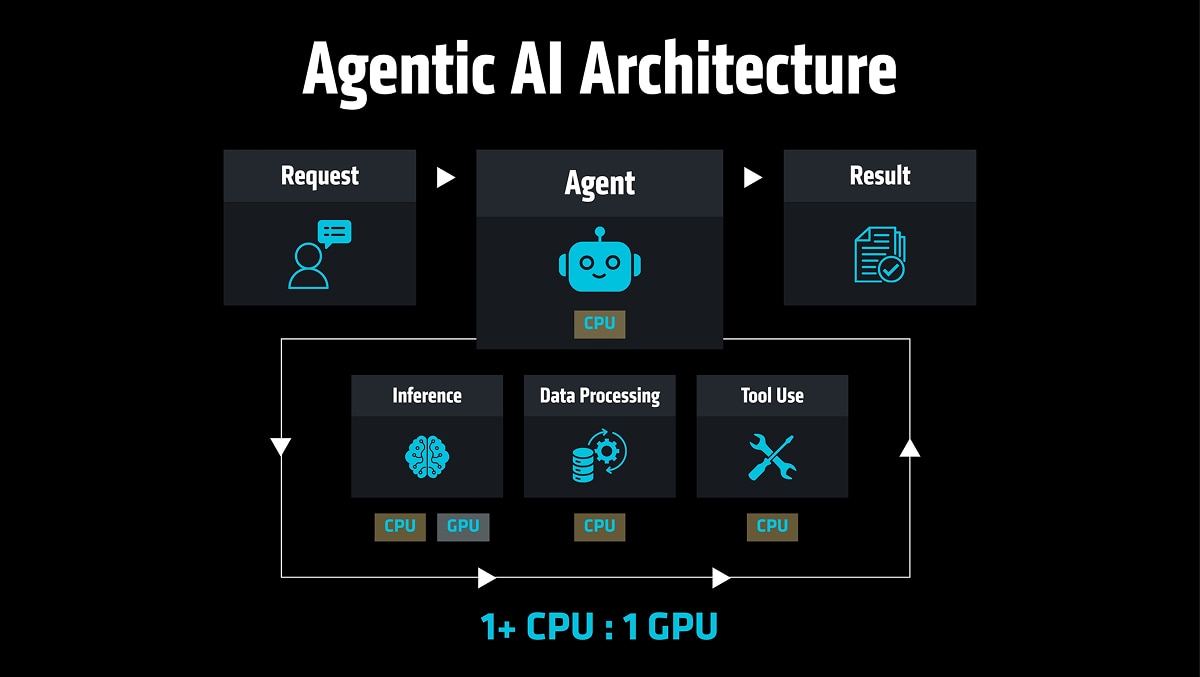

We are now in the early days of the Agentic AI Era. In it, the shape of the workload changes entirely. Instead of answering one prompt, an agent breaks a goal into steps, decides what to do next, calls multiple models, queries databases, connects with APIs, runs enterprise applications, checks permissions, retrieves memory, validates output and then loops back through the process again. It’s a very different infrastructure profile from prompt-in, answer-out chatbot AI.
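The loop described above can be sketched in a few lines. This is a minimal illustration, not a real agent framework: `call_model`, `cpu_step`, `run_agent`, the goal and the step names are all hypothetical, and the model call is a stub standing in for a GPU-backed inference request. The point is only to show how many non-model steps surround each model call.

```python
from dataclasses import dataclass, field


@dataclass
class Trace:
    """Counts where each unit of work would run in this toy model."""
    cpu_ops: int = 0
    model_calls: int = 0
    log: list = field(default_factory=list)


def call_model(prompt: str, trace: Trace) -> str:
    # Stub for a GPU-backed inference request (hypothetical).
    trace.model_calls += 1
    return f"draft for: {prompt}"


def cpu_step(name: str, trace: Trace) -> None:
    # Stub for work that would run on general-purpose CPU servers.
    trace.cpu_ops += 1
    trace.log.append(name)


def run_agent(goal: str, steps: list[str], max_loops: int = 2) -> Trace:
    trace = Trace()
    cpu_step("plan: break goal into steps", trace)
    for _ in range(max_loops):
        for step in steps:
            cpu_step(f"check permissions: {step}", trace)
            cpu_step(f"retrieve memory: {step}", trace)
            cpu_step(f"call tool or API: {step}", trace)
            draft = call_model(f"{goal} / {step}", trace)
            cpu_step(f"validate output: {draft}", trace)
        cpu_step("decide whether to loop again", trace)
    return trace


trace = run_agent("reconcile invoices", ["query database", "update ERP record"])
print(f"CPU-side steps: {trace.cpu_ops}, model calls: {trace.model_calls}")
```

The counts are step tallies, not a measurement of compute cost: a single model call can be far more expensive than a single permission check. What the sketch shows is the change in shape, with each model call wrapped in several CPU-side operations that repeat on every pass through the loop.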

GPUs are still critical for model execution, but the production workload is now CPU-intensive. CPUs are responsible for:

- Orchestration: Managing the engine that breaks down complex tasks.

- Agent Execution and Tool Calls: Triggering APIs and legacy enterprise software.

- Policy and Security: Running real-world checks on every autonomous action.

The Answer to the CPU-GPU Shift Is Not Just ‘Add More CPUs’

Chatbot AI typically ran at a CPU-to-GPU ratio of 1:4 to 1:8. With agentic AI, we are seeing that ratio move toward 1:1 and, in some cases, tilt further toward the CPU side.
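A small calculation makes the size of that change concrete. The sketch below assumes a hypothetical deployment of 64 GPUs and counts abstract "CPUs," since the ratios above do not specify whether the unit is a socket or a server.

```python
import math


def cpus_needed(gpus: int, gpus_per_cpu: float) -> int:
    """CPUs required to serve `gpus` at a ratio of 1 CPU per `gpus_per_cpu` GPUs."""
    return math.ceil(gpus / gpus_per_cpu)


GPUS = 64  # hypothetical deployment size

for label, gpus_per_cpu in [("chatbot 1:8", 8), ("chatbot 1:4", 4), ("agentic 1:1", 1)]:
    print(f"{label}: {cpus_needed(GPUS, gpus_per_cpu)} CPUs for {GPUS} GPUs")
```

For the same 64 GPUs, the CPU count goes from 8 or 16 under the chatbot ratios to 64 at 1:1, which is a four- to eightfold increase.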

Here is the important part: You don’t achieve this by simply sprinkling more CPUs into a box of GPUs. You achieve it by adding a newly engineered CPU compute layer.

For enterprise IT leaders, this is where your planning needs to evolve.

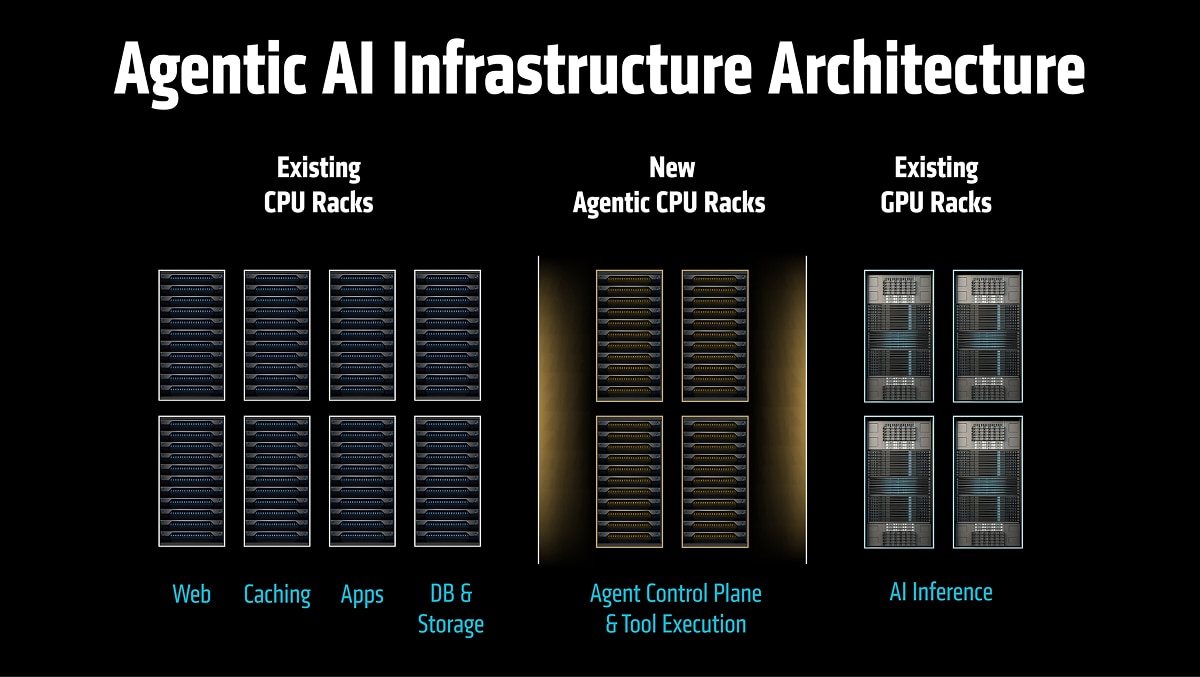

The AI system of choice for the next few years will not be a single “AI box.” It will look more like a distributed system. You’ll have GPU racks for dense model compute and agentic CPU racks for orchestration, data processing and tool execution, tied together by fast networking and a software stack that can keep it all observable, secure and efficient.

At this point, a balanced architecture will matter more than ever. If the CPU tier is undersized, GPUs wait. If networking is an afterthought, agents stall. If the data path is messy, latency grows. If the orchestration layer is not designed for concurrency, cost and complexity rise.
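The first of those failure modes, GPUs waiting on an undersized CPU tier, can be illustrated with a deliberately simple throughput model. Everything here is an assumption made for the example: the function name, the per-worker rates and the idea that the pipeline runs at the rate of its slower tier. Real systems also have queuing, batching and network effects that this ignores.

```python
def gpu_utilization(cpu_workers: int, tasks_per_cpu_worker: float,
                    gpus: int, tasks_per_gpu: float) -> float:
    """Fraction of GPU capacity in use when the slower tier limits throughput."""
    cpu_rate = cpu_workers * tasks_per_cpu_worker  # tasks/s the CPU tier can prepare
    gpu_rate = gpus * tasks_per_gpu                # tasks/s the GPU tier can consume
    return min(cpu_rate, gpu_rate) / gpu_rate


# Hypothetical rates: each CPU worker and each GPU handles 10 tasks per second.
for workers in (4, 8, 16):
    util = gpu_utilization(workers, 10.0, gpus=8, tasks_per_gpu=10.0)
    print(f"{workers} CPU workers, 8 GPUs -> GPU utilization {util:.0%}")
```

With these made-up rates, halving the CPU tier leaves the GPUs idle half the time, while adding CPU capacity beyond the balance point does not raise GPU utilization further. The exact balance point in a real deployment depends on the workload.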

Where AMD Fits

AMD EPYC™ processors give customers a portfolio of CPU choices optimized for different parts of the AI pipeline, from high-frequency leadership for latency-sensitive work to dense-core leadership for scale-out throughput. And we continue to extend that leadership with our current roadmap, which includes the “Venice” products that will further expand the portfolio of AI-optimized CPUs. Ultimately, we are providing the specialized silicon to populate each rack in your data center (and each compute instance in your cloud environment) with exactly what it needs.

Practical Takeaway for IT Leaders

I’ll say it again: agentic AI is changing the infrastructure equation.

My request to enterprise IT decision-makers: As agentic AI moves from pilot to production, do not size infrastructure as if you were just adding a chatbot to your enterprise. Size it like you are adding a new class of digital workforce – one that needs to plan, act, check, retrieve, call tools and execute workflows all day long.

It means planning for more CPU capacity than earlier AI assumptions suggested. It means looking beyond the GPU server and thinking about racks, fabrics, software and operational balance. In the agentic era, performance will not come from one processor doing everything. It will come from the right architecture – with CPUs and GPUs working together to move AI from answers to action.

Cautionary Statement

This blog contains forward-looking statements concerning Advanced Micro Devices, Inc. (AMD) such as the expected growth of the total addressable market for server CPUs, which are made pursuant to the Safe Harbor provisions of the Private Securities Litigation Reform Act of 1995. Forward-looking statements are commonly identified by words such as "would," "may," "expects," "believes," "plans," "intends," "projects" and other terms with similar meaning. Investors are cautioned that the forward-looking statements in this presentation are based on current beliefs, assumptions and expectations, speak only as of the date of this presentation and involve risks and uncertainties that could cause actual results to differ materially from current expectations. Such statements are subject to certain known and unknown risks and uncertainties, many of which are difficult to predict and generally beyond AMD's control, that could cause actual results and other future events to differ materially from those expressed in, or implied or projected by, the forward-looking information and statements. Investors are urged to review in detail the risks and uncertainties in AMD’s Securities and Exchange Commission filings, including but not limited to AMD’s most recent reports on Forms 10-K and 10-Q.

AMD does not assume, and hereby disclaims, any obligation to update forward-looking statements made in this presentation, except as may be required by law.