Powering the Engines of the Modern Studio: AMD at NAB 2026

Apr 17, 2026

The 2026 NAB Show runs April 18-22 in Las Vegas, bringing together industry professionals, technology companies, software developers, and content creators interested in the latest hardware and software advances. One overarching theme of the show this year (or, at least, an acknowledged trend) is that the demand for high-quality, personalized content is scaling exponentially, but production budgets and timelines are not. More platforms, more regions, more audiences, and more formats require more finished work, delivered faster, with fewer hand-offs between the people and systems producing it. The desire to create high-quality films and television shows for wide audiences on smaller budgets is, of course, nothing new, but modern content creators must deal with a fragmented media landscape that bears little resemblance to the distribution channels of decades past.

AMD has helped develop some of the technologies now reshaping the industry, and we're glad to be at NAB 2026 to celebrate that work with our peers and partners. A number of AMD employees and partners will speak throughout the event, including John Canning (PreVis to VirProd in Realtime - Latest Tips and Tricks by the Experts and How to Use AI and Keep Your Creative Edge), photographer, content creator, and filmmaker Travis Keyes (Built for the Moment: Live Capture to Delivery), and DGA director AJ Bleyer (Creators and the Post Production Process).

Virtual Production Grows Up

In 2019, AMD technology helped underpin some of the first LED volume deployments used on The Mandalorian. At the time, replacing large portions of on-location shooting with real-time rendered virtual production still carried genuine risk. Virtual production requires tight coordination between compute, rendering engines, and production teams, all of them operating on timelines that leave little room for error.

ILM's StageCraft work, and the wider industry adoption that followed, had a genuine impact on both the feel of the story and its special effects. Real-time environments on LED walls let filmmakers capture complex shots entirely on camera, substantially reducing the need for on-location work. LED volumes have continued to mature over the past seven years, and adoption has grown to encompass companies you probably don't have on your bingo card of most likely adopters.

Clorox, unlike, say, ILM, is not a pioneering VFX studio with a tentpole budget. It manages a portfolio of brands spanning dozens of product lines and hundreds of SKUs, each with its own retail requirements and audience targets. Clorox didn't need alien landscapes or striking starship bridges nearly as much as it needed a way to create affordable, tailored content across a vast range of products and brands.

Working with Qube Cinema and Triality Studios, Clorox deployed an LED volume workflow built around AMD Ryzen Threadripper PRO 7000 WX-Series processors and Radeon AI PRO R9700 hardware running Unreal Engine. The results show, in a practical, real-world example, what democratizing content production can deliver. A standard two-day shoot that previously yielded two finished commercials on location now produces approximately fourteen. Long-time industry constraints tied to physical locations or the expense of on-location shooting have been alleviated, shifting potential bottlenecks from post-production to how quickly teams can plan, render, and iterate on their own designs and concepts.

Aaron Behman, senior product marketing manager at AMD, will speak on stage in a joint presentation with Clorox and Qube Cinema at 2:50 on April 21 in the W2457 VideoNext theater. Planned topics include the success of Clorox's foray into LED volumes and the company's plans to explore running generative AI workloads on the same AMD infrastructure that powered its initial virtual production exploration.

Accelerating Post and Delivery

That same pressure for speed extends into post-production. To that end, AMD has worked with Adobe to add support for the AVX-512 instruction set to Photoshop, improving performance and responsiveness across a range of filters and processing toolchains.

AMD also supported Adobe's new Color Mode color editor, a new addition to Adobe Premiere Pro intended to make sophisticated color editing accessible to video editors who might find themselves overwhelmed by the complex tools used by professional colorists, yet underwhelmed by hobbyist-grade alternatives.

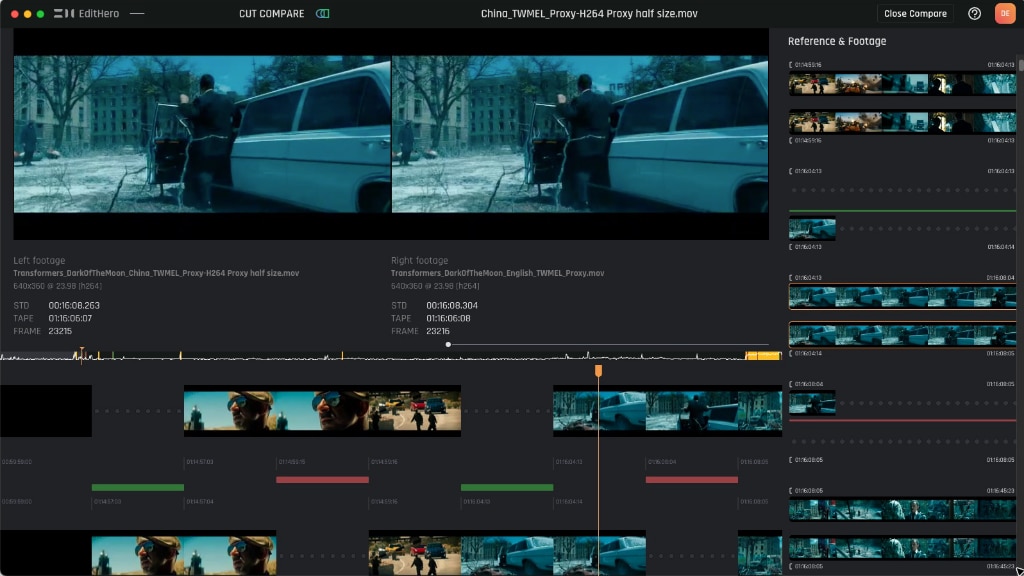

Tools like EditHero reflect a similar interest in delivering AI assistance for editors who need to move quickly without sacrificing professional quality. At NAB 2026, EditHero is leaning into what they call "Version Intelligence," defined as the ability to instantly compare and understand what changed between two different versions of the same content.

Under the hood, EditHero uses perceptual hashing to generate a fingerprint of every frame, then combines it with machine learning-based matching to align sequences across formats, resolutions, and reframes without needing timecodes or metadata.
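To make the idea of a per-frame fingerprint concrete, here is a minimal sketch of average hashing, one of the simplest perceptual hashes. It is an illustration only: EditHero's actual hashing and matching methods are not described here, and the function names (`downsample`, `average_hash`, `hamming_distance`) and the representation of a frame as a grid of grayscale values are assumptions made for this example.

```python
from typing import List, Sequence

# Assumption: a frame is a grid of grayscale intensities (rows of numbers).
Frame = Sequence[Sequence[float]]


def downsample(frame: Frame, size: int = 8) -> List[List[float]]:
    """Shrink a frame to size x size by averaging the pixels in each grid cell."""
    height, width = len(frame), len(frame[0])
    if height < size or width < size:
        raise ValueError("frame must be at least size x size pixels")
    small = []
    for gy in range(size):
        y0, y1 = gy * height // size, (gy + 1) * height // size
        row = []
        for gx in range(size):
            x0, x1 = gx * width // size, (gx + 1) * width // size
            cell = [frame[y][x] for y in range(y0, y1) for x in range(x0, x1)]
            row.append(sum(cell) / len(cell))
        small.append(row)
    return small


def average_hash(frame: Frame, size: int = 8) -> int:
    """Return a size*size-bit fingerprint: one bit per cell, set if the cell is brighter than the mean."""
    pixels = [p for row in downsample(frame, size) for p in row]
    mean = sum(pixels) / len(pixels)
    bits = 0
    for p in pixels:
        bits = (bits << 1) | (1 if p > mean else 0)
    return bits


def hamming_distance(a: int, b: int) -> int:
    """Count the bits that differ between two fingerprints; smaller means more similar."""
    return bin(a ^ b).count("1")
```

Because the hash depends only on coarse brightness structure, the same picture at two resolutions tends to produce identical or near-identical fingerprints, while visually different frames differ in many bits. That property is what makes this family of techniques a plausible starting point for aligning versions without timecodes; a production system would layer more robust hashes and learned matching on top.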

Such frame-level analysis is computationally intensive, which is why EditHero has chosen to build its platform around AMD Ryzen Threadripper™ PRO processors. New capabilities EditHero is announcing at the show this year include shot-based comparison results for clearer editorial alignment, high-level change comparison across both video and audio timelines, spectrogram-based audio heatmaps, intelligent change-type grouping, and a comments and tagging system that supports filtering and reporting. The throughput that AMD hardware delivers at the compute level is increasingly matched by software tools meant to collapse the gap between rough cut and finished delivery.

On the delivery side of the equation, broadcasters are actively replacing racks of single-purpose encoding appliances with software-defined architectures that can be reconfigured as workloads change. MediaKind's MK.IO Beam platform runs on 5th Gen AMD EPYC processors, consolidating encoding, transcoding, and multiplexing onto commercial off-the-shelf servers and reducing physical headend footprint by as much as 75 percent. The same stack can handle 4K contribution at a stadium edge or scale into public cloud infrastructure without requiring a redesign of the underlying control logic. Software like MK.IO simplifies workflows in which a single asset may need to travel simultaneously across live broadcast, on-demand platforms, international feeds, and personalized versions, all with deterministic, low-latency performance. If you'd like to learn more, Chris Bellaci has written a deeper dive into MK.IO, available here.

At the Pinnacle of VFX

Even as these efficiencies scale across enterprise advertising and broadcast infrastructure, AMD has continued to invest in multiple stages of the visual effects pipeline. A recently signed Memorandum of Understanding between AMD and Wētā FX signals that commitment, with joint exploration of next-generation tools aimed at optimizing complex VFX pipelines and accelerating emerging volumetric workflows. That work represents the other end of the same spectrum. The same underlying compute capabilities that let Clorox shoot a dozen commercials in a weekend are, in more concentrated form, the technology pushing the boundary of what is visually possible at all.

The Advanced Imaging Society recognized this sustained commitment to the craft last year by honoring AMD executive Jack Huynh with a Lumiere Award, effectively acknowledging AMD as both a technology vendor and a working partner to many companies. AMD silicon shows up inside cameras, large-scale VFX pipelines, LED volume stages, post-production, and broadcast headends. The form factor may change depending on the use case, but the underlying commitments to compute density, flexible deployments, mobile and desktop workstations, and tight integration with the software tools industry professionals already use do not.

It may seem unexpected to find the future of media production illustrated by a company shooting supermarket product commercials. But this trickle-down pattern is exactly what a mature, scalable ecosystem looks like. Infrastructure that once powered a prestige Star Wars series is now available to brands that need a dozen or so ad spots finished by Monday. The NAB Show's focus on bringing people together from so many different areas of content creation always surfaces this type of interesting trend, and we hope to see you at the show.