-

- Name

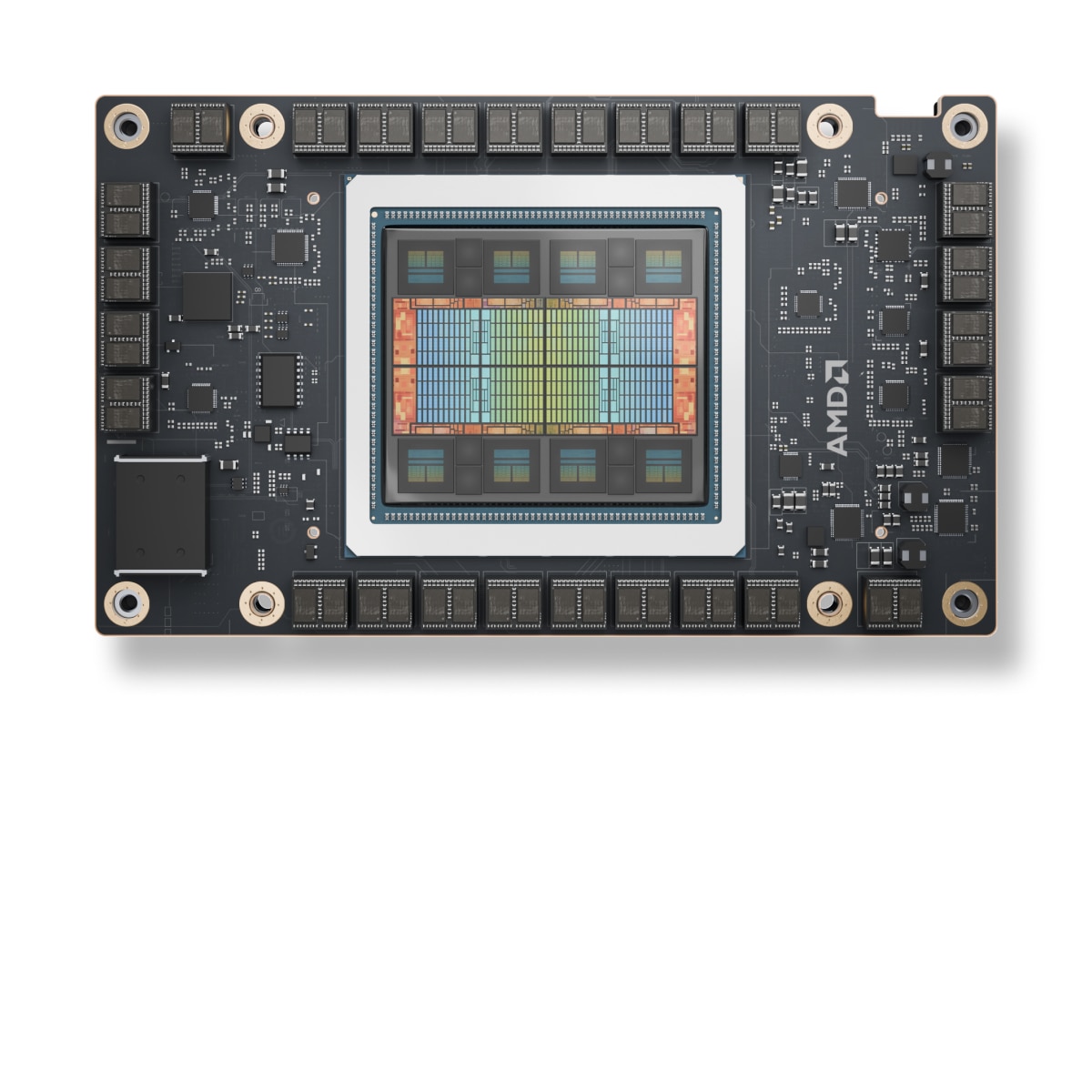

- AMD Instinct™ MI325X

- Family

- Instinct

- Series

- Instinct MI300 Series

- Form Factor

- Servers

- Launch Date

- 10/10/2024

- GPU Architecture: CDNA3
- Lithography: TSMC 5nm | 6nm FinFET
- Stream Processors: 19,456
- Matrix Cores: 1,216
- Compute Units: 304
- Peak Engine Clock: 2100 MHz
- Peak Eight-bit Precision (FP8) Performance (E5M2, E4M3): 2.61 PFLOPs
- Peak Eight-bit Precision (FP8) Performance with Structured Sparsity (E5M2, E4M3): 5.22 PFLOPs
- Peak Half Precision (FP16) Performance: 1.3 PFLOPs
- Peak Half Precision (FP16) Performance with Structured Sparsity: 2.61 PFLOPs
- Peak Single Precision (TF32 Matrix) Performance: 653.7 TFLOPs
- Peak Single Precision (TF32) Performance with Structured Sparsity: 1.3 PFLOPs
- Peak Single Precision Matrix (FP32) Performance: 163.4 TFLOPs
- Peak Single Precision (FP32) Performance: 163.4 TFLOPs
- Peak Double Precision Matrix (FP64) Performance: 163.4 TFLOPs
- Peak Double Precision (FP64) Performance: 81.7 TFLOPs
- Peak INT8 Performance: 2.6 POPs
- Peak INT8 Performance with Structured Sparsity: 5.22 POPs
- Peak bfloat16: 1.3 PFLOPs
- Peak bfloat16 with Structured Sparsity: 2.61 PFLOPs
- Transistor Count: 153 Billion
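The peak figures listed above appear consistent with a simple model: peak throughput = compute units × operations per clock per compute unit × peak engine clock. The sketch below checks that against the listed numbers. It is an illustration under stated assumptions, not AMD's published derivation: the per-compute-unit ratios are inferred by dividing the listed totals, a fused multiply-add is assumed to count as 2 FLOPs, and the helper names are hypothetical.

```python
# Values copied from the specification list.
COMPUTE_UNITS = 304
STREAM_PROCESSORS = 19_456
MATRIX_CORES = 1_216
PEAK_CLOCK_HZ = 2_100e6  # 2100 MHz

# Listed dense peaks in TFLOPs. The PFLOPs entries are rounded in the listing.
LISTED_PEAK_TFLOPS = {
    "FP64 vector": 81.7,
    "FP64 matrix": 163.4,
    "FP32 vector": 163.4,
    "TF32 matrix": 653.7,
    "FP16 matrix": 1300.0,
    "FP8 matrix": 2610.0,
}


def peak_tflops(flops_per_clock_per_cu: float) -> float:
    """Peak throughput for a given per-CU, per-clock operation count."""
    return COMPUTE_UNITS * flops_per_clock_per_cu * PEAK_CLOCK_HZ / 1e12


def implied_flops_per_clock_per_cu(listed_tflops: float) -> float:
    """Back out the per-CU, per-clock operation count from a listed peak."""
    return listed_tflops * 1e12 / (COMPUTE_UNITS * PEAK_CLOCK_HZ)


# Ratios inferred from the listed totals (not stated in the listing itself).
sp_per_cu = STREAM_PROCESSORS // COMPUTE_UNITS
mc_per_cu = MATRIX_CORES // COMPUTE_UNITS

# Assumption: each stream processor retires one FP64 FMA (2 FLOPs) per clock.
fp64_vector = peak_tflops(sp_per_cu * 2)

print(f"stream processors per CU: {sp_per_cu}")
print(f"matrix cores per CU: {mc_per_cu}")
print(f"modelled FP64 vector peak: {fp64_vector:.1f} TFLOPs")
for name, listed in LISTED_PEAK_TFLOPS.items():
    implied = implied_flops_per_clock_per_cu(listed)
    print(f"{name}: about {implied:.0f} FLOPs per clock per CU")
```

The structured-sparsity entries in the list are twice the corresponding dense entries, up to the rounding of the PFLOPs figures, so the same model covers them with a factor of 2.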

- External Power Connectors: 54V UBB
- Typical Board Power (TBP): 1000W Peak

- Last Level Cache (LLC): 256 MB
- Dedicated Memory Size: 256 GB
- Dedicated Memory Type: HBM3E
- Infinity Cache: Yes
- Memory Interface: 8192-bit
- Memory Clock: 6 GHz
- Peak Memory Bandwidth: 6 TB/s
- Memory ECC Support: Yes (Full-Chip)
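The listed peak memory bandwidth can be roughly reproduced from the interface width and memory clock. The sketch below assumes the 6 GHz figure is the effective per-pin data rate (6 Gb/s per pin) and that GB and TB are decimal units; neither is stated in the listing, and the variable names are illustrative.

```python
# Values copied from the specification list.
MEMORY_INTERFACE_BITS = 8192
MEMORY_CLOCK_HZ = 6e9        # assumption: effective data rate per pin, in bits/s
MEMORY_SIZE_BYTES = 256e9    # assumption: decimal gigabytes
LISTED_BANDWIDTH_BYTES_PER_S = 6e12  # 6 TB/s as listed

# Bandwidth = bus width in bits x per-pin data rate / 8 bits per byte.
bandwidth_bytes_per_s = MEMORY_INTERFACE_BITS * MEMORY_CLOCK_HZ / 8
bandwidth_tb_s = bandwidth_bytes_per_s / 1e12

# Lower bound on the time to read the full memory once at the listed peak rate.
full_sweep_seconds = MEMORY_SIZE_BYTES / LISTED_BANDWIDTH_BYTES_PER_S

print(f"modelled peak bandwidth: {bandwidth_tb_s:.3f} TB/s")
print(f"one full pass over memory: at least {full_sweep_seconds * 1e3:.1f} ms")
```

Under these assumptions the model lands slightly above the listed 6 TB/s, which suggests the listed figure is rounded. The sweep time is a floor for memory-bound workloads that must touch every byte once, such as reading all model weights for a single decode step.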

- GPU Form Factor: OAM Module
- Bus Type: PCIe® 5.0 x16
- Infinity Fabric™ Links: 8
- Peak Infinity Fabric™ Link Bandwidth: 128 GB/s
- Cooling: Passive OAM
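For a sense of scale, the interconnect figures above can be compared with simple arithmetic. The sketch below assumes the 128 GB/s figure applies to each link and that all links can be driven at once; the listing does not say whether the figure is per direction or bidirectional, so the aggregate is only an upper bound. The PCIe number uses the standard PCIe 5.0 signalling rate of 32 GT/s per lane with 128b/130b encoding and ignores protocol overhead.

```python
# Values copied from the specification list.
INFINITY_FABRIC_LINKS = 8
LINK_BANDWIDTH_GB_S = 128  # assumption: per link

# Upper bound if every link runs at its peak simultaneously.
aggregate_fabric_gb_s = INFINITY_FABRIC_LINKS * LINK_BANDWIDTH_GB_S

# PCIe 5.0 x16, one direction: 32 GT/s per lane, 128b/130b line encoding.
PCIE_GT_PER_S = 32e9
PCIE_LANES = 16
pcie_gb_s_per_direction = PCIE_GT_PER_S * PCIE_LANES * (128 / 130) / 8 / 1e9

print(f"aggregate Infinity Fabric peak: {aggregate_fabric_gb_s} GB/s")
print(f"PCIe 5.0 x16 per direction: {pcie_gb_s_per_direction:.1f} GB/s")
```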

- Supported Technologies: AMD CDNA™ 3 Architecture, AMD ROCm™ - Ecosystem without Borders, AMD Infinity Architecture
- RAS Support: Yes
- Page Retirement: Yes
- Page Avoidance: Yes
- SR-IOV: Yes

AMD Instinct™ MI325X Accelerators

AMD Instinct™ MI325X GPU accelerators target AI training and inference, pairing 256 GB of HBM3E memory and 6 TB/s of peak memory bandwidth with up to 2.61 PFLOPs of peak FP8 performance.