From Silicon to Cloud: AMD on AWS Essentials for IT Leaders

Mar 31, 2026

After nearly three decades as a hands-on technical contributor, including time at AWS, I recently joined AMD. I'll admit that even with all that experience, the sheer technical depth of AMD sales and engineering briefings was enough to make my head spin. If you've ever sat through one, you know exactly what I mean.

It's well worth the effort to decode some of those deep technical concepts into plain English for IT leaders and technically curious decision-makers who want to better understand the role AMD plays in the AWS cloud ecosystem. Here's my attempt.

The AMD Journey to the Cloud with AWS

Let's start with a bit of context. AMD was founded in Sunnyvale, California, in 1969, and its early work was in memory chips and other semiconductors. It wasn't until 1975 that AMD launched its first processor products, the Am9080 and the Am2900 family, setting the stage for where the company is today.

Fast-forward to 2018, when AWS and AMD began working together to offer customers more choice and outstanding value in cloud computing. Since 2018, AWS has launched instances with all generations of EPYC CPUs spanning general purpose, memory-optimized, compute-optimized, burstable, and HPC instance families.

AMD EPYC Server CPUs aren't limited to AWS. They're available across all major public clouds, and AMD is fully invested in both cloud and on-premises workloads, helping customers meet their goals with efficient, high-performance compute options.

What AMD Offers on AWS Today

- AWS 5a Instance Families (m5a, c5a, r5a)

These were the first generation of AMD CPU-powered instances on AWS. They may still be slightly cheaper in some cases, but they're considered legacy options, best suited to workloads that need a balanced mix of compute, memory, and networking at lower cost and don't need bleeding-edge single-thread performance.

- AWS t3a (Burstable) Instance Family

This is the only burstable AMD CPU-powered instance family. These instances are low-cost and optimized for workloads with variable CPU demand, delivering a consistent baseline level of performance with the ability to burst when needed. They suit workloads with low average CPU use and occasional spikes rather than steady or high-performance needs. To learn more about how burstable instances work, see the AWS documentation on burstable performance instances.
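Burstable instances run on CPU credits: one credit equals one vCPU at 100% utilization for one minute, and an instance earns credits continuously at its baseline rate. The minimal sketch below shows that arithmetic. The figures (2 vCPUs, 10% baseline per vCPU, a starting balance of 24 hours' worth of earned credits) are assumptions modeled on a t3a.micro-sized instance; confirm the numbers for your size in the AWS documentation.

```python
"""Illustrative CPU-credit arithmetic for a burstable (T-family) instance.

Assumption: a t3a.micro-like size (2 vCPUs, 10% baseline per vCPU).
Check the AWS documentation for the size you actually run.
"""


def credits_earned_per_hour(vcpus: int, baseline: float) -> float:
    # One CPU credit = one vCPU at 100% for one minute, so an instance
    # earns vcpus * baseline * 60 credits every hour.
    return vcpus * baseline * 60


def burst_minutes(balance: float, vcpus: int, baseline: float, utilization: float) -> float:
    """Minutes a burst can last before the credit balance reaches zero.

    While bursting, credits are spent at vcpus * utilization per minute
    but are still earned at vcpus * baseline per minute.
    """
    net_drain_per_minute = vcpus * (utilization - baseline)
    if net_drain_per_minute <= 0:
        return float("inf")  # at or below baseline, the balance never drains
    return balance / net_drain_per_minute


if __name__ == "__main__":
    vcpus, baseline = 2, 0.10
    earned = credits_earned_per_hour(vcpus, baseline)
    print(f"Credits earned per hour: {earned:.0f}")
    # Assumed starting balance: 24 hours of earned credits.
    minutes = burst_minutes(24 * earned, vcpus, baseline, 1.0)
    print(f"Full burst on both vCPUs lasts about {minutes:.0f} minutes")
```

Running it at 100% on both vCPUs drains credits at 1.8 per minute net, because the instance keeps earning at its baseline while it bursts.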

- AWS 6a Instance Families (m6a, c6a, r6a)

6a is the AWS instance generation before 7a, built on 3rd Gen AMD EPYC Server CPUs and offering excellent value. These instances are around 10% less expensive than comparable x86-based options, and in some regions (such as Mumbai) up to 45% less expensive.

- AWS 7a Instance Families (m7a, c7a, r7a)

These instances are powered by 4th Gen AMD EPYC Server CPUs and deliver up to 50% higher performance compared to the previous M6a generation.[1] Organizations evaluating these instances often focus on improved price-performance and workload consolidation. For example, Pinterest shared at AWS re:Invent 2025 that migrating workloads from c5.9xlarge to m7a.4xlarge instances resulted in approximately 35–40% price-performance improvement based on internal analysis.[2]

- AWS 8a Instance Families (m8a, c8a, r8a)

The latest addition to the M instance family, m8a instances are powered by 5th Gen AMD EPYC 9005 Series Server CPUs with a maximum frequency of 4.5 GHz. You can expect up to 30% higher performance and up to 19% better price-performance compared with M7a instances. They also provide higher memory bandwidth, improved networking and storage throughput, and flexible configuration options for a broad set of general-purpose workloads.
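Price-performance is simply performance divided by price, so a performance gain and a price-performance gain together imply a price ratio. The sketch below applies that to the 30% and 19% figures above. It assumes both "up to" numbers come from the same benchmark, which the post does not state, so read the result as a rough illustration rather than a published price difference.

```python
"""Price-performance arithmetic for comparing instance generations.

Price-performance here means performance per dollar. Inputs are relative
(old generation = 1.0) and purely illustrative.
"""


def price_performance(perf: float, hourly_price: float) -> float:
    # Work done per dollar of instance time.
    return perf / hourly_price


def implied_price_ratio(perf_gain: float, price_perf_gain: float) -> float:
    """New/old price implied by a performance gain and a price-performance gain.

    new/old performance = 1 + perf_gain
    new/old price-performance = 1 + price_perf_gain
    therefore new/old price = (1 + perf_gain) / (1 + price_perf_gain)
    """
    return (1 + perf_gain) / (1 + price_perf_gain)


if __name__ == "__main__":
    ratio = implied_price_ratio(0.30, 0.19)
    print(f"Implied new/old price ratio: {ratio:.3f}")
```

Under that same-benchmark assumption, the ratio comes out near 1.09: the newer instance would cost somewhat more per hour while still doing more work per dollar.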

A Closer Look at More AMD Technical Concepts

Let's walk through some of the most beneficial AMD technologies.

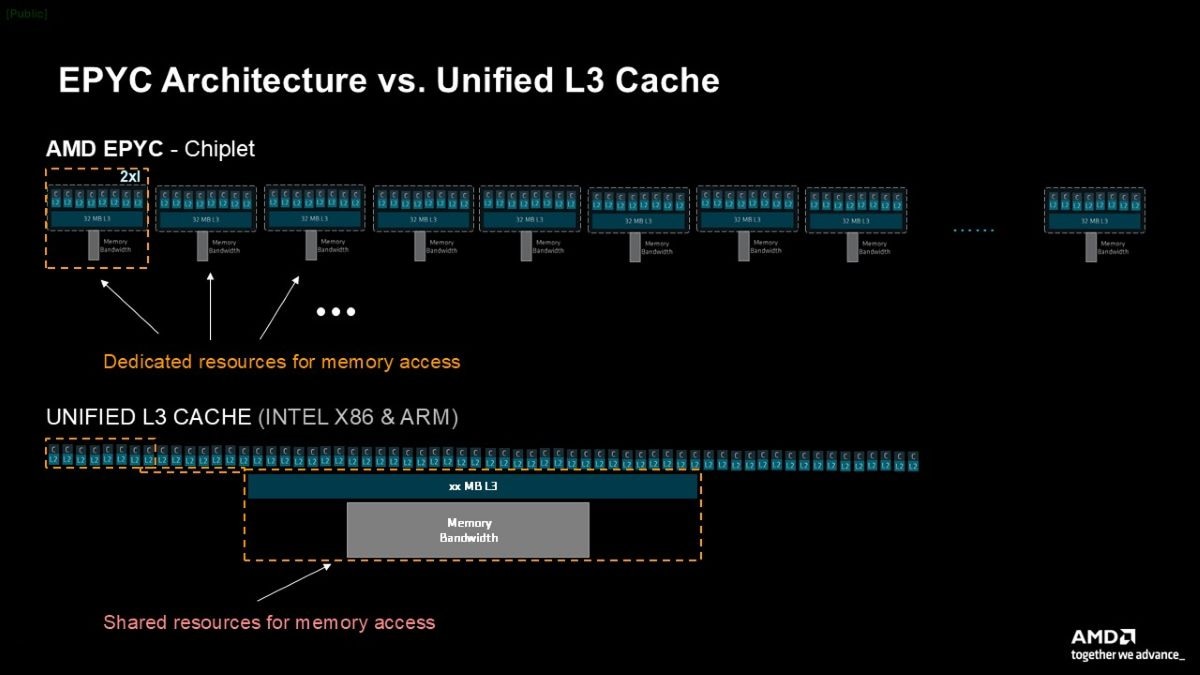

Chiplet Architecture: Modular, Efficient, and Smart

Unlike traditional CPUs built as one large block (a monolithic design), AMD EPYC Server CPUs are made up of smaller, modular components called chiplets. On the 4th Gen EPYC CPUs behind 7a instances, each chiplet contains eight cores that share a dedicated L3 cache, and all chiplets connect to a central I/O die that handles memory and I/O. This matters in shared environments like EC2 because it limits "noisy neighbor" effects: if one workload thrashes the cache, the contention stays largely within its own chiplet's L3 rather than spreading across the whole CPU. The result is more consistent performance for your workloads, especially in virtualized environments.
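On a Linux instance, you can see which vCPUs share an L3 cache (and therefore sit on the same chiplet) by reading the kernel's cache topology in sysfs. The sketch below groups CPUs by their shared L3. It assumes the standard `/sys/devices/system/cpu/cpu*/cache/index*/` layout with `level` and `shared_cpu_list` files; inside a VM, the topology shown is whatever the hypervisor exposes, so treat the output as indicative.

```python
"""Group logical CPUs by the L3 cache they share, using Linux sysfs.

On AMD EPYC, CPUs that share an L3 cache sit on the same chiplet. In a
VM, the reported topology is what the hypervisor presents to the guest.
"""
from pathlib import Path


def l3_groups(sysfs_cpu_root: str = "/sys/devices/system/cpu") -> list[str]:
    """Return the distinct shared_cpu_list values for level-3 caches."""
    groups: set[str] = set()
    # "cpu[0-9]*" skips sibling entries such as cpufreq and cpuidle.
    for cache_dir in Path(sysfs_cpu_root).glob("cpu[0-9]*/cache/index[0-9]*"):
        level_file = cache_dir / "level"
        shared_file = cache_dir / "shared_cpu_list"
        if not (level_file.is_file() and shared_file.is_file()):
            continue
        if level_file.read_text().strip() == "3":
            groups.add(shared_file.read_text().strip())
    return sorted(groups)


if __name__ == "__main__":
    for i, cpus in enumerate(l3_groups()):
        print(f"L3 group {i}: CPUs {cpus}")
```

Each printed group is one L3 domain; on an 8-core-per-chiplet part with all cores visible, you would expect groups of eight physical cores.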

CPU Cache Hierarchy: L1, L2, and L3

When your CPU needs data, it looks in its cache before going to main memory (which is slower). AMD EPYC CPUs use three levels of cache (the sizes below are for the 4th Gen EPYC CPUs in 7a instances):

- L1 (64KB per core, split into 32KB for instructions and 32KB for data): It's the fastest and smallest level, holding the instructions and data the core is working on right now.

- L2 (1MB per core): It stores recently used or prefetched data.

- L3 (32MB per chiplet): It's shared among the cores in a chiplet and is larger but slower.

Together, this cache system boosts performance by reducing the CPU's need to access RAM.
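A standard way to quantify this is average memory access time (AMAT): each level's hit latency plus its miss rate times the cost of going one level further out. The sketch below computes AMAT for a three-level hierarchy. Every latency and miss rate in it is a hypothetical round number chosen for illustration, not a measured EPYC figure.

```python
"""Average memory access time (AMAT) through a multi-level cache.

All latencies (in CPU cycles) and miss rates are hypothetical round
numbers for illustration, not measured EPYC figures.
"""


def amat(levels: list[tuple[float, float]], memory_latency: float) -> float:
    """Compute AMAT for caches listed from L1 outward.

    Each entry is (hit_latency, local_miss_rate). Working from the outside in:
    time(level) = hit_latency + miss_rate * time(next level out).
    """
    time = memory_latency
    for hit_latency, miss_rate in reversed(levels):
        time = hit_latency + miss_rate * time
    return time


if __name__ == "__main__":
    caches = [(4, 0.10), (14, 0.40), (50, 0.50)]  # hypothetical L1, L2, L3
    dram = 300.0                                   # hypothetical DRAM latency
    print(f"L1 + L2 + L3: {amat(caches, dram):.1f} cycles on average")
    print(f"L1 only:      {amat(caches[:1], dram):.1f} cycles on average")
```

With these made-up numbers, adding L2 and L3 cuts the average access from 34 to about 13.4 cycles, because far fewer requests ever reach RAM.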

Transparent Secure Memory Encryption (TSME)

TSME encrypts all data in main memory without any application or OS changes. Most systems encrypt data at rest (like EBS volumes), but data in RAM is often left unencrypted. TSME closes that gap: on AMD CPU-powered EC2 instances it's always on and fully transparent, with no configuration needed. That's especially valuable in multi-tenant environments such as the cloud.

AMD Secure Encrypted Virtualization (SEV) with Secure Nested Paging

AMD SEV provides memory isolation at the hardware level: each protected VM's memory is encrypted with its own key, managed by the CPU. While AWS also offers Nitro Enclaves (isolation enforced by the Nitro Hypervisor), SEV is built into the CPU itself. Secure Nested Paging (SEV-SNP) adds memory integrity protection, guarding against a compromised hypervisor remapping or replaying guest memory, so it offers an extra layer of protection from hypervisor-level access, even by AWS itself. Some things to know: on AWS, SEV-SNP is only available on 6a instances today, must be enabled at launch, adds a 10% cost premium, can't be turned off later, and doesn't support hibernation or Nitro Enclaves.
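Because SEV-SNP must be chosen at launch, it is set in the launch request itself. The minimal boto3 sketch below builds RunInstances parameters with `CpuOptions={"AmdSevSnp": "enabled"}`, the EC2 option for SEV-SNP. The helper names, the placeholder AMI ID, and the `SNP_FAMILIES` set (taken from this post's "6a only" statement) are illustrative assumptions; AWS also lists requirements for the AMI, so check its documentation before launching.

```python
"""Build EC2 RunInstances parameters that enable AMD SEV-SNP at launch.

Assumptions: helper names and the AMI ID are hypothetical placeholders,
and SNP_FAMILIES mirrors this post's "6a only" statement; confirm the
currently supported instance types in the AWS documentation.
"""

SNP_FAMILIES = {"m6a", "c6a", "r6a"}  # assumption: 6a families per the post


def snp_launch_params(image_id: str, instance_type: str) -> dict:
    family = instance_type.split(".", 1)[0]
    if family not in SNP_FAMILIES:
        raise ValueError(f"{instance_type} is not in the assumed SEV-SNP families")
    return {
        "ImageId": image_id,
        "InstanceType": instance_type,
        "MinCount": 1,
        "MaxCount": 1,
        # SEV-SNP can only be chosen at launch and cannot be disabled later.
        "CpuOptions": {"AmdSevSnp": "enabled"},
    }


def launch(params: dict) -> str:
    """Launch the instance with boto3 (requires AWS credentials)."""
    import boto3  # imported lazily so snp_launch_params works without boto3

    ec2 = boto3.client("ec2")
    response = ec2.run_instances(**params)
    return response["Instances"][0]["InstanceId"]
```

Usage, with a hypothetical AMI ID: `launch(snp_launch_params("ami-0123456789abcdef0", "m6a.large"))`. Validating the instance type before calling AWS makes the "6a only, launch-time only" constraint explicit in code.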

Simultaneous Multi-Threading (SMT)

SMT allows one CPU core to run two threads simultaneously. This keeps the core busy even if one thread is stalled. On EC2, each thread appears as a vCPU, so an SMT-enabled core counts as two vCPUs.

- 6a instances have SMT enabled

- 7a instances have SMT disabled

There is, however, a tradeoff. For workloads like BYOL (bring your own license) Windows Server on Dedicated Hosts, SMT affects how many licenses you need, because Windows Server is licensed per physical core, not per vCPU. For example:

- r6a (with SMT): 96 physical cores → 192 vCPUs → ~$40,626 in licenses

- r7a (no SMT): 192 physical cores → 192 vCPUs → ~$81,252 in licenses

It's the same vCPU count but double the licensing cost.
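Since Windows Server is licensed by physical core, the example reduces to simple arithmetic. The sketch below derives a per-core price from the post's own r6a figure ($40,626 / 96 cores, about $423.19); that derived price is an assumption for illustration, not an official Microsoft price, and the sketch ignores licensing minimums and other terms.

```python
"""Per-physical-core licensing arithmetic for the SMT example.

PRICE_PER_CORE is derived from this post's r6a figure; it is an
illustrative assumption, not an official price list entry.
"""

PRICE_PER_CORE = 40_626 / 96  # about $423.19 per physical core


def vcpus(physical_cores: int, smt: bool) -> int:
    # With SMT, each core exposes two hardware threads, i.e. two vCPUs.
    return physical_cores * (2 if smt else 1)


def license_cost(physical_cores: int) -> float:
    # Licensing follows physical cores, so vCPU count does not matter here.
    return physical_cores * PRICE_PER_CORE


if __name__ == "__main__":
    for name, cores, smt in (("r6a", 96, True), ("r7a", 192, False)):
        print(f"{name}: {vcpus(cores, smt)} vCPUs, ${license_cost(cores):,.0f}")
```

Both hosts expose 192 vCPUs, but the r7a host needs licenses for twice as many physical cores, which is exactly where the doubled cost comes from.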

(If you'd like SMT support in newer 7a instances, AMD wants to hear from you! Reach out at AWS@AMD.com.)

Advanced Instructions: AVX-512, VNNI, and bfloat16

AMD EPYC CPUs from the 4th Gen onward (as used in 7a and 8a instances) support several powerful instruction sets to speed up modern workloads:

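- AVX-512 applies one instruction to up to 512 bits of data at once (for example, 16 single-precision numbers), making it ideal for AI, data analytics, and HPC.

- VNNI (Vector Neural Network Instructions) speeds up the low-precision integer multiply-accumulate operations at the heart of AI inference, for use cases such as image recognition and natural language processing.

- bfloat16 is a 16-bit floating-point format for deep learning that cuts memory use in half compared with 32-bit floats while keeping the same numeric range (it keeps FP32's 8-bit exponent and gives up mantissa precision). It enables faster, more efficient ML training and inference.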
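To see what "half the memory, same range" means in practice, the standard-library sketch below converts a value to bfloat16 by keeping the top 16 bits of its float32 encoding (with round-to-nearest-even) and converts it back. It is a software illustration of the number format only; the AVX-512 BF16 instructions perform such conversions in hardware, and NaN handling is omitted here for brevity.

```python
"""Round a float to bfloat16 and back, using only the standard library.

bfloat16 keeps float32's sign bit and 8-bit exponent but only 7 mantissa
bits, so it preserves FP32's range while giving up precision.
NaN handling is omitted for brevity.
"""
import struct


def to_bfloat16_bits(x: float) -> int:
    # Reinterpret x (rounded to float32) as an unsigned 32-bit integer.
    bits = struct.unpack("<I", struct.pack("<f", x))[0]
    # Round to nearest, ties to even, on the low 16 bits being dropped.
    rounding_bias = 0x7FFF + ((bits >> 16) & 1)
    return ((bits + rounding_bias) >> 16) & 0xFFFF


def from_bfloat16_bits(b: int) -> float:
    # Widen back to float32 by appending 16 zero bits.
    return struct.unpack("<f", struct.pack("<I", b << 16))[0]


def round_trip(x: float) -> float:
    return from_bfloat16_bits(to_bfloat16_bits(x))


if __name__ == "__main__":
    for value in (3.14159, 1e38, 1e-30):
        print(f"{value!r:>10} -> {round_trip(value)!r}")
```

Values as large as 1e38 or as small as 1e-30 survive the round trip with only a small relative error, while 3.14159 comes back as 3.140625: the range is kept, and only precision is lost.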

In short: If you're working with AI, ML, or big data, these instruction sets can help you get your work done faster and more cost-effectively, provided your libraries, frameworks, or compiler builds actually use them.
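Whether these instructions are available depends on the CPU generation behind an instance type, so it is worth checking before you rely on them. The sketch below reads the `flags` line of `/proc/cpuinfo` on Linux and reports the kernel flag names `avx512f`, `avx512_vnni`, and `avx512_bf16`. The mapping to friendly names is an illustrative choice, and on non-Linux systems the script simply reports nothing found.

```python
"""Report which AVX-512 features the Linux kernel exposes via /proc/cpuinfo.

Flag names are the Linux kernel's; the friendly names in FEATURES are an
illustrative mapping.
"""

FEATURES = {
    "avx512f": "AVX-512 Foundation",
    "avx512_vnni": "AVX-512 VNNI",
    "avx512_bf16": "AVX-512 BF16",
}


def cpu_flags(cpuinfo_text: str) -> set[str]:
    # Every logical CPU repeats the same flags line; the first one is enough.
    for line in cpuinfo_text.splitlines():
        if line.startswith("flags"):
            return set(line.split(":", 1)[1].split())
    return set()


def report(cpuinfo_text: str) -> dict[str, bool]:
    flags = cpu_flags(cpuinfo_text)
    return {name: flag in flags for flag, name in FEATURES.items()}


if __name__ == "__main__":
    try:
        with open("/proc/cpuinfo") as f:
            text = f.read()
    except OSError:
        text = ""  # not Linux, or /proc is unavailable
    for name, present in report(text).items():
        print(f"{name}: {'yes' if present else 'no'}")
```

Running this on a candidate instance type before a migration is a quick way to confirm that an AVX-512-optimized build will actually find the instructions it expects.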

Wrapping It All Up

Here's a quick summary of what I've covered:

- AMD CPU-powered EC2 instances offer competitive pricing and impressive performance.

- 7a instances deliver up to 50% higher performance compared to the previous M6a generation.[1]

- Chiplet architecture helps reduce noisy-neighbor effects and supports more consistent performance.

- TSME and SEV-SNP give your data an extra layer of protection.

- Advanced instructions (on 7a and later) make AMD-powered instances well suited to AI and machine learning.

- SMT status matters for licensing and performance optimization.

Don’t Go It Alone

Whether you're modernizing, downsizing, or simply looking for cost efficiency, AMD offers tools and expertise to guide the way. The AMD EPYC Advisory Suite can help you identify the right EC2 instance for your workload, and AMD works closely with AWS partners to deliver tailored solutions. Many organizations combine several strategies across different workloads for maximum ROI.

If you need help deciding what fits your architecture best, AMD and its partners are ready to assist. To offer feedback on this post or to suggest future topics, feel free to reach out at AWS@AMD.com.

©2026 Advanced Micro Devices, Inc. All rights reserved. AMD, the AMD arrow, AMD EPYC™, and combinations thereof, are trademarks of Advanced Micro Devices, Inc. Other product names used in this publication are for identification purposes only and may be trademarks of their respective companies. Supported features may vary by operating system. Please confirm with the system manufacturer for specific features. No technology or product can be completely secure.

Footnotes

[1] AWS, Amazon EC2 M7a Instances

[2] AWS, re:Invent 2025